Last week I had an interesting meeting with GLOBALFOUNDRIES executives Kevin Meyer and Mojy Chian. It certainly seems that GFI has turned a corner! I will be in Dresden next week for DATE 2012 and will also visit the GFI Fab there. 28nm and 20nm are on track so expect an aggressive implementation plan from GFI this year.

Continue reading “Designing ARM Powered High Performance SoCs on 28nm and 20nm!”

OpenAccess DB – Productivity and Beyond!

As I have been watching developments in the EDA and semiconductor industry, it is apparent that we remain fragmented unless pushed to adopt a common standard, mostly for business reasons. Foundries dictate the rules that designs must follow, and EDA tools incorporate those rules. Design companies also need to work with tools from different vendors. As a result, various exchange formats appeared at various levels (layer, device, block, design and so on), first to define and satisfy the rules and second to exchange information between different tools. Yet the industry needed a common database for tighter integration and interoperability between tools, eliminating data translation between formats. OpenAccess is an open standard database, owned and distributed by SI2, available at last after a wait of about 8-10 years.

There are multiple advantages to using the OpenAccess database. I will not go into the details of its data exchange, capacity and performance benefits, but rather how it tremendously improves productivity in design and tool development, and the robustness of the flow. SI2 provides the database along with its access APIs, so any tool developed in-house or by a 3rd party on this database can be tightly integrated, enabling a robust design flow based on multiple tools working on the same database. This also eliminates multiple data translations through various formats, which in turn eliminates unwanted errors and troubleshooting overhead. While I was talking to Brady Logan (Director of Business Development at SpringSoft), he showed me a demo of the Laker environment. It reflects the true use and advantage of OpenAccess.

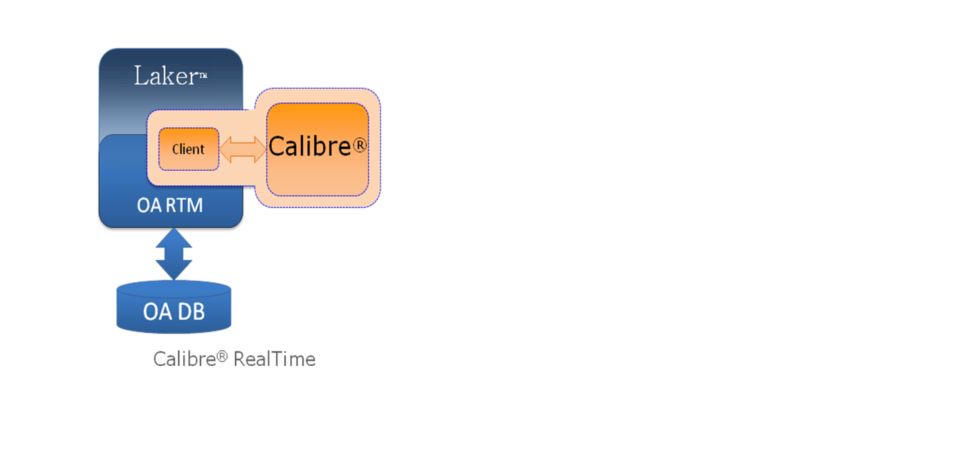

The Laker (from SpringSoft) and Calibre (from Mentor Graphics) Realtime Runtime Model enables the two tools to run concurrently on the same database to provide users with real-time, sign-off quality feedback on edits and DRC fixing. The use model’s elimination of Stream In and Stream Out has a proven 10X productivity gain over the older methodology. Brady tells me that this use model has proven value at advanced nodes, where edits and fixes can create numerous violations of complex conditional rules. It’s obvious that all of the time-consuming cycles through Stream during edit-verify-fix iterations go away.
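To see why removing Stream In and Stream Out can matter so much, here is a rough back-of-the-envelope model in Python. Every timing in it is a made-up placeholder, not a measurement from Laker or Calibre, and the function names are mine. The only point is that when data translation dominates each edit-verify-fix iteration, dropping it gives a large multiple.

```python
def iteration_minutes(edit, verify, stream_out=0.0, stream_in=0.0):
    """Minutes spent on one edit-verify-fix iteration."""
    return edit + stream_out + verify + stream_in


def total_minutes(iterations, **per_iteration):
    """Minutes spent on a whole session of `iterations` passes."""
    return iterations * iteration_minutes(**per_iteration)


# Hypothetical placeholder timings (minutes), chosen only for illustration.
ITERATIONS = 20

# Older methodology: every pass streams the layout out to the checker
# and streams the results back in.
stream_flow = total_minutes(ITERATIONS, edit=1.0, verify=1.0,
                            stream_out=9.0, stream_in=9.0)

# Shared-database methodology: both tools work on the same data,
# so the two translation steps cost nothing.
shared_db_flow = total_minutes(ITERATIONS, edit=1.0, verify=1.0)

speedup = stream_flow / shared_db_flow

print(f"stream-based flow:    {stream_flow:.0f} min")
print(f"shared-database flow: {shared_db_flow:.0f} min")
print(f"speedup:              {speedup:.1f}x")
```

With these placeholder values translation takes 90% of each iteration, so the model lands on 10x. The numbers were picked to match the quoted figure, not derived from it; the real ratio will depend on design size and on how long verification itself takes.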

Overall flow in Laker system based on OpenAccess looks like this –

Profitability – Imagine the resources every company spends maintaining every format and its translation to other formats and databases, plus the longer design cycles those formats cause. If all that expense can be saved, it will definitely improve the bottom line of the whole industry, and the resources can be deployed on other high-end challenges. OpenAccess enables saving that unnecessary cost. It must be noted, though, that Cadence invested significantly in the making of this database.

Further Extensions – In one of my recent articles, I talked about standardizing design-level analog constraints. If the OpenAccess database can be extended to include these constraints, then analog designs, even at the level of schematics and layouts, can be shared with other parts of the design and with tools from different vendors on the same database, thereby easing integration, editing and verification of the complete design together. Another good extension would be for 3D-IC, to assimilate designs from various sources, specifically information about TSVs, partitioning across different planes, power distribution networks and stress (thermal and mechanical) analysis, with tools for different uses working on the same database. For power distribution, the CPF (Common Power Format) and UPF (Unified Power Format) formats are available, but they need to be unified into a single format first for greater interoperability.

By Pawan Kumar Fangaria

EDA/Semiconductor professional and Business consultant

Email: Pawan_fangaria@yahoo.com

Call for ERSA Sponsorship: International Gathering for Application Developers!

Commercial & Academic

July 16-19, 2012, Las Vegas, USA

ersaconf.org/ersa-news


Not me. Who owns IP quality?

Now that the dominant approach to building an SoC is to get IP from a number of sources and assemble it into a chip, the issue of IP quality is more and more critical. A chip won’t work if the IP doesn’t work, but it is quite difficult to verify this because the SoC design team is not intimately familiar with the IP blocks since nobody on the team designed them.

At DATE next week in Dresden there is a panel session on just this topic, moderated by Gary Smith. It takes place from 13:15 to 14:15 (or 1.15pm to 2.15pm for Americans) in the Exhibition Theater.

Participating on the panel are:

- Fahim Rahim, director of engineering at Atrenta in Grenoble

- Simon Butler, CEO of Methodics in San Francisco

- Gabriele Saucier, president of D&R in Grenoble

- Andreas Bruning, director of the technology office of ZDMI in Dresden

- Gerd Teepe, director of design enablement for GlobalFoundries in Dresden

While there are many tools available to help verify, debug, assemble and otherwise manipulate IP, there’s a distinct lack of a solid design data management system to address the specific needs of SoC designers. As a result, IP often suffers from a bad rap regarding quality. Users blame providers, and tool vendors and CAD managers are often caught in the middle, trying to put together solutions that track changes, use models and offer some degree of version control. Complicating matters is that the term “IP quality” has different meanings to different people. Is it 1) the functional correctness of the IP, that is, does it work the way it is supposed to (i.e. bug free); or 2) is it defined by its ability to do what is expected with respect to design parameters such as power, timing and area?

The panel will discuss what needs to be done to improve the design environment from the perspective of all the players.

And if you are at DATE in Dresden, there is an interesting piece of “design re-use” that is worth a visit: the Frauenkirche, destroyed by bombing in 1945. The first time I went to Dresden it was still a ruin, but it has been completely rebuilt. The original was built in 1726-43 and has been rebuilt using the original plans and many of the original stones (you can tell the old from the new because they are charred). In 2003 it was half built when I took the second photo. It reopened in 2005, 60 years after it collapsed. Wikipedia page here.

Atrenta/TSMC Soft-IP Alliance: 10 companies make the grade

Last May, Atrenta and TSMC announced the Soft-IP Alliance Program which uses SpyGlass and a subset of its GuideWare reference methodology to implement TSMC’s IP quality assessment program. TSMC requires all soft-IP providers to reach a minimum level of completeness before their IP is listed on TSMC online. Since TSMC is so dominant in the foundry business right now (Global struggling with process, Intel talking the talk but not yet really walking the walk, UMC…whatever happened to them anyway?) getting approved and listed with TSMC is extremely important.

Atrenta put everything needed to meet TSMC’s requirements in an IP Handoff Kit. Under the hood this uses SpyGlass’s RTL analysis suite to check for syntax and semantic correctness, simulation-synthesis mismatches, connectivity rules, clock domain crossings, test coverage, timing constraints and…lots more.

Suk Lee of TSMC (my successor at running IC marketing when we were both at Cadence) sees this as measurably improving IP quality. Of course TSMC isn’t directly responsible for IP quality but if IP fails and chips don’t go into production TSMC don’t make any money. Anyway, ten companies have now jumped through all the hoops and qualified their IP for inclusion in the TSMC 9000 IP library.

The companies in this initial program are a veritable who’s who of the IP world (with the notable exceptions of ARM and Synopsys). In alphabetical order so as not to offend anyone:

- Arteris (NoC)

- CEVA (DSP cores)

- Chips&Media (video IP)

- Digital Media Professionals (graphics IP)

- Imagination Technologies (GPU cores)

- Intrinsic-ID (security IP)

- MIPS Technologies (CPU cores)

- Sonics (NoC)

- Tensilica (reconfigurable processors and cores)

- Vivante (GPU cores)

Now that the dominant way to build an SoC is through assembling IP, the issue of IP quality is a huge problem, and a mixture of tools, methodologies, standards and certification is for sure the way to address it.

Verdi’s 3rd Symphony

The first version of the debug platform Verdi (then called Debussy) dates back to 1996, over 15 years ago. The second version was released in 2002. And now SpringSoft is releasing the 3rd version, Verdi³, which is a completely new generation. A tool environment like Verdi seems to need to be completely refreshed about every 5 or 6 years to take account of the changes in the scale of design and the issues which have become important.

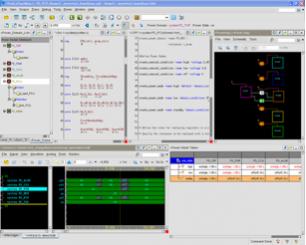

The motivation for creating Verdi³ is to fit in with how engineers on today’s design teams work. The debug tasks vary widely depending on the company’s methodology and flow, the attributes of the design, what needs to be done, and the engineer’s job-function, experience and style. There are three themes in the changes to the platform:

- new user-interface and personalization capabilities

- open platform for interoperability and customization

- new infrastructure for performance and capacity improvements

Personalization means tailoring the appearance and layout of the tool to the way that you want to work to accomplish the task at hand.

Customization is the capability to change or add to the functions available, such as adding automation to perform a repetitive task, or integrating proprietary tools into the environment. Of course there is also functionality available from 3rd parties, not to mention the VIA website announced last year. The extensibility is built on top of VIA (Verdi Interoperability Apps), making it easy to plug in in-house tools, add items to menus, create hot-keys and so forth. For example, above the environment has been configured for power analysis.

The new user-interface is more modern and cleaner. Gone are the overlapping windows with information regularly buried out of sight. Instead the interface is now tiled so everything is within sight. Plus there are some big incremental capabilities such as being able to have multiple source-code windows open at the same time (the older version of Verdi only allowed one). A powerful search capability makes finding anything easy. Above is a comparison of the old user interface (on the left) and the new (on the right).

The basic infrastructure has been refreshed. A new parser lifts restrictions from the old one, is multi-threaded and generally faster. The FSDB (simulation data) has been compressed by 30% compared to the previous version, and also reads twice as fast on a typical 4-core configuration.
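As a quick illustration of what those two figures would mean in practice, here is a small worked calculation in Python. The starting file size and read time are hypothetical placeholders, not SpringSoft data, and the sketch assumes the 30% reduction and the 2x read speed apply uniformly to the same dump, which the announcement does not spell out.

```python
def size_after_compression(old_size_gb, reduction_percent=30):
    """File size after shrinking by `reduction_percent` percent."""
    return old_size_gb * (100 - reduction_percent) / 100


def read_time_after_speedup(old_read_seconds, speedup=2):
    """Read time after reading `speedup` times as fast."""
    return old_read_seconds / speedup


# Hypothetical starting point, chosen only for illustration.
OLD_SIZE_GB = 100
OLD_READ_SECONDS = 600

new_size_gb = size_after_compression(OLD_SIZE_GB)
new_read_seconds = read_time_after_speedup(OLD_READ_SECONDS)

print(f"dump size: {OLD_SIZE_GB} GB -> {new_size_gb:.0f} GB")
print(f"read time: {OLD_READ_SECONDS} s -> {new_read_seconds:.0f} s")
```

The two improvements are independent in this sketch: one saves disk space, the other saves waiting time when loading simulation data into the debugger.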

So, Verdi³ is a rebuild of Verdi from the ground up, with major developments in productivity, a much richer capability for customization and higher performance and capacity.

Verdi³ is in general beta, which means all existing customers have access today and can go and download the beta release. Official first customer ship is in April.

If you are just interested in finding out more, the Verdi³ product page is here.

TSMC 28nm Yield Explained!

Yield: no topic is more important to the semiconductor ecosystem. After spending a significant part of my career on Design for Manufacturability (DFM) and Design for Yield (DFY), I’m seriously offended when semiconductor professionals make false and misleading statements that negatively affect the industry that supports us.


The 2012 International Conference on ENGINEERING OF RECONFIGURABLE SYSTEMS AND ALGORITHMS – ERSA’12

July 16-19, 2012, Monte Carlo Resort, Las Vegas, Nevada, USA

ERSA-News: ERSA-NEWS ERSA’12 Website: http://ersaconf.org/ersa12

FPGAs connect users over TV whitespace

New embedded computing standards always take a while to get traction, and a burning question for innovators is what to do in the period between concept and acceptance. Sometimes, new ideas come when commercial silicon changes direction.

CEO Forecast Panel

This year’s CEO forecast panel was held at Silicon Valley Bank. Bankers live better than verification engineers, as if you didn’t know, based on the quality of the wine they were serving compared to DVCon.

This year the panelists were Ed Cheng from Gradient, Lip-Bu, Aart and Wally (and if you don’t know who they are you haven’t been paying attention) and Simon Segars of ARM (not their CEO, of course). Ed Sperling moderated. Somebody had managed to dig up the fact that at the start of his career he’d been a crime reporter, so that made sure that we only got truthful answers all evening!

The format was completely different from prior years. Instead of each CEO getting up and doing a presentation, various questions were discussed. The CEOs had been polled beforehand on their answers and then EDAC members (I’m not sure how many or who, it was a bit unclear) had also been polled. So one of the interesting things was to focus on areas where the CEOs differed from the man-in-the-street. And by ‘man in the street’ I mean design engineers. You’ll get some pretty odd looks, even in Silicon Valley, asking a random person in the street when stacking die will go mainstream.

Q1: when will stacking die become mainstream? CEOs were more conservative than their customers, with CEOs going for 2015-2016 and customers for 2013. Although, as Ed Cheng pointed out, what does mainstream mean? There are stacked die in most cameras, in most DRAM assemblies and so on already. Everyone agreed that memory on processor, and 2.5D interposer, would arrive long before true 3D (meaning punching TSVs through active area all over the die).

Q2: what is the most difficult challenge? CEOs went with integration, but their customers picked software, time-to-market, power and cost. Typing this now, I’m not sure if the CEOs meant integration of their tools or integration of IP etc on chips. Certainly in the big EDA companies, integrating tools to work smoothly together, now that they have to look forward and back, up and down the tool chain, is a major challenge.

Q3: what is the most prevalent concern for EDA customers? Everyone picked power, so at least the CEOs understand something about their customers. The CEOs also picked performance but their customers were more concerned with area/cost. Power is an issue right from the architectural level, where people are looking for 5-10X reduction (hmm, that’s going to be tough), all the way down to the process, where FinFETs are coming, soon if you are Intel, and in a couple of years if you are not.

Q4: what technology challenge will spur the next growth in EDA? Survey says…IP reuse and integration for the CEOs, and hardware/software co-design for the customers. Wally pointed out, as he has several times before, the surprising fact that all the growth in EDA, all of it, comes from new technologies. Something new comes along like place and route, grows fast and then plateaus for a decade while something new builds growth on that base.

Q5: what will EDA look like in 5 years? CEOs picked the same number of major companies but defined differently. Customers predict more startups and more consolidation. Wally noted that the top 3 EDA companies have made up 75% of EDA revenue for 40 years and that will continue, although which 3 companies may change (I guess Wally can take pride in being the only member of the DMV, Daisy, Mentor, Valid, that survived). Lip-Bu said that some VCs are starting to come back to EDA. I have to say that any companies I’ve been involved with have not seen any signs of this (my feeling is that EDA companies don’t need enough money to be interesting to most funds, and the exits are too low).

Q6: where will the majority of leading edge designs be done in 5 years? EDA CEOs all picked North America, but about 30% of customers picked China. Everyone agreed that on a 10 year timescale, things are a lot less certain. Aart said that a country (think China) goes through 3 stages: competing on cost, competing on competence and finally competing on creativity. China is moving from the cost to the competence era at the moment, I guess.

Q7: who will drive the industry in 5 years? Everyone said system/software companies, but the CEOs also had some love for fabless companies and the customers for foundries and IDMs. To some extent the answer is ‘all of the above’ of course, but you can’t ignore system companies, Apple in particular, due to scale. Apple increased its revenue last year by roughly Intel’s revenue. It is an unequal battle.

Q8: will SoCs rely on more cores in a single processor or more processors in 5 years? Pretty much everyone reckoned both, more cores and more processors. The big challenge here is the dreaded dark silicon. We can put the cores on there but can we power them all up?

There were a few questions from the audience. Peggy Aycinena asked, since this was a forecast panel, what the forecast for 2012 was. All the CEOs had given guidance in recent conference calls: Synopsys and Mentor predicted 8.4% and Cadence 8.8%.