Cadence issued its press release about ONFI 3.0 support at the beginning of this year. It was a good illustration of the long-term commitment of Denali, and now Cadence, to NAND Flash controller IP. The ONFI 3 specification simplifies the design of high-performance computing platforms, such as solid state drives and enterprise storage solutions, and of consumer devices, such as tablets and smartphones, that integrate NAND Flash memory. The new specification defines speeds of up to 400 mega-transfers per second. In addition to the new ONFI 3 specification, the Cadence Flash and controller IP also support the Toggle 2.0 specification.

“NAND flash is very dramatically growing in the computing segment and is no longer just for storing songs, photos, and videos,” said Jim Handy, director at Objective Analysis. “The result is that the bulk of future NAND growth will consist of chips sporting high-speed interfaces. Cadence support of ONFI 3 and other high-speed interfaces is coming at the right time for designers of SSDs and other systems.”

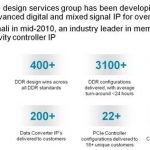

If you compare the size of this IP segment with the DDRn controller IP segment in terms of design starts, it has so far been one order of magnitude smaller. Looking at the design wins made by Cadence on the IP market, you can see that the Denali products have generated 400+ design wins for DDRn memory controllers, while the Flash memory controller design wins are in the 50+ range. To be clear, we are talking about the Flash-based memory products used in:

- Data centers to support Cloud computing (high IOPS need)

- Mobile PC or Tablets to support “instant on” (SSD replacing HDD)

- NOT the eMMC and various flash cards

The latter market segment certainly generates many more IP sales, but at only a fraction of the license price of a Flash controller managing the NVM used in a data center or an SSD. The Flash memory controller IP family from Cadence targets the high end of the market.

It is also interesting to notice that Synopsys, which covers most interface protocol IP, including DDRn memory controllers where it enjoys a good market share alongside Cadence, does not support Flash memory controllers. You may argue that this market segment is pretty small, so why should Synopsys care about it? Simply because it could be the future of the storage market! If you look at storage, you probably think “SATA” and Hard Disk Drive (HDD)… All HDDs shipped for use inside a PC are SATA enabled, as are the very few SSDs integrated to replace HDDs in the Ultra Notebook market. That’s right. But, as a matter of fact, SATA, as the standalone protocol to support storage, has reached a limit: a technology limit, since SATA 3.0, based on a 6 Gbps PHY, will be the last SATA PHY.

We can guess that SATA, as a protocol stack, will survive, as some features like Native Command Queuing (NCQ) are unique to SATA and very efficient at optimizing storage access (whether HDD or SSD). But the PHY part is expected to be PCI Express based in the future, with the protocol name becoming “SATA Express”, at least for the PC (desktop, enterprise, mobile) and Media Tablet segments, where a single PCIe gen-3 lane will offer about 1 GB/s of bandwidth, compared with 0.6 GB/s for SATA 3.0 (6 Gbps on the wire, 4.8 Gbps after 8b/10b encoding).

Still in the storage area, but Flash based, the current solution for high I/O Per Second (IOPS) applications integrates a NAND Flash memory controller with an interface protocol which could in theory be SATA 3.0, USB 3.0 or PCI Express, but which in practice is PCIe: for example, a x4 PCIe gen-2 link offering 20 Gb/s raw bandwidth, or 2 GB/s effective.
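To make the bandwidth figures quoted for these links easy to check, here is a minimal Python sketch of the arithmetic. It assumes the standard line encodings (8b/10b for SATA 3.0 and PCIe gen-2, 128b/130b for PCIe gen-3) and ignores packet and protocol overhead, so the results are upper bounds on payload throughput. The table and function names are purely illustrative.

```python
# Per-lane line rate in Gb/s, lane count, and encoding efficiency
# (payload bits / bits on the wire). Protocol overhead is ignored.
LINKS = {
    "SATA 3.0":      {"gbps_per_lane": 6.0, "lanes": 1, "efficiency": 8 / 10},
    "PCIe gen-3 x1": {"gbps_per_lane": 8.0, "lanes": 1, "efficiency": 128 / 130},
    "PCIe gen-2 x4": {"gbps_per_lane": 5.0, "lanes": 4, "efficiency": 8 / 10},
}


def raw_gbps(link):
    """Raw bandwidth on the wire, in gigabits per second."""
    return link["gbps_per_lane"] * link["lanes"]


def effective_gbytes(link):
    """Payload bandwidth after line encoding, in gigabytes per second."""
    return raw_gbps(link) * link["efficiency"] / 8


for name, link in LINKS.items():
    print(f"{name}: raw {raw_gbps(link):.1f} Gb/s, "
          f"payload {effective_gbytes(link):.2f} GB/s")
```

Under these assumptions SATA 3.0 carries 0.6 GB/s of payload (4.8 Gb/s after encoding), one PCIe gen-3 lane carries just under 1 GB/s, and a x4 PCIe gen-2 link carries 2 GB/s out of 20 Gb/s raw.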

Here, the emerging standard is named NVM Express. It will formalize, after the fact, the solution currently in use, and will probably define a roadmap to support the higher bandwidth needs associated with the development of cloud computing.

Using NAND Flash devices has a cost: repeatedly accessing a specific memory location ends up degrading the device at that location, especially for Multi-Level Cell (MLC) Flash. This effect is amplified when you use Flash devices manufactured in smaller technology nodes, and it gets worse as you move to higher capacity devices, since these are built on the smallest nodes. In other words, the more you use an SSD, the higher the risk of generating an error. Cadence implements sophisticated, highly configurable error correction techniques to further enhance performance and deliver enterprise class reliability. Delivering advanced configurability, low-power capabilities and support for system boot from NAND, the Cadence solution is scalable from mobile applications to the data center. The IP is backward-compatible with existing ONFI and Toggle standards. The existing Cadence IP offering supports the ONFI 1, ONFI 2, Toggle 1 and Toggle 2 specifications, and also provides asynchronous device support. Cadence also offers supporting verification IP (VIP) and memory models to ensure successful implementation.
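To give a feel for what error correction means in this context, here is a toy Python sketch. It is not the Cadence implementation, whose details are not described here. NAND controllers generally rely on much stronger codes such as BCH or LDPC; the sketch swaps in the classic Hamming(7,4) code only because it is short enough to read in full. It stores 4 data bits in 7 bits and recovers them even if any single bit has flipped. The function names are illustrative.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit Hamming codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit, then return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome is the 1-based position of the flipped bit (0 = no error).
    syndrome = s1 + 2 * s2 + 4 * s3
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


# Usage: simulate a worn cell flipping one stored bit, then read it back.
data = [1, 0, 1, 1]
stored = hamming74_encode(data)
stored[4] ^= 1  # single-bit error
assert hamming74_decode(stored) == data
```

A code like this corrects only one error per 7-bit word. Production controllers correct many bit errors per page, which is why the strength and configurability of the ECC matter more as nodes shrink.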

The move from SATA-based storage to SATA Express compliant HDDs or NVM Express compliant SSDs will certainly change the storage landscape, as well as the positioning of the IP vendors. Synopsys is well positioned in the SATA IP and PCI Express IP segments, whereas Cadence does not support SATA IP but does support NAND Flash and PCI Express controller IP. With the emergence of “SATA Express” and “NVM Express”, the cards will be reshuffled for IP vendors, and that will be interesting to monitor!

By Eric Esteve from IPNEST