The dominance of GPUs in AI workloads has long been driven by their ability to handle massive parallelism, but this advantage comes at the cost of high power consumption and architectural rigidity. A new approach built on a chiplet-based RISC-V vector processor offers an alternative that balances performance, efficiency, and flexibility, and moves toward heterogeneous computing suited to AI/ML workloads. By rethinking vector computation and memory bandwidth management, a scalable AI accelerator could rival NVIDIA’s GPUs in both cloud and edge computing.

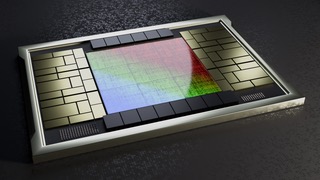

A modular chiplet architecture is essential for achieving scalability and efficiency. Instead of a monolithic GPU design, a system composed of specialized chiplets can optimize different aspects of AI computation. A vector processing chiplet, built on the RISC-V vector extension, serves as the primary computational unit, dynamically adjusting vector length to fit workloads of varying complexity. A matrix multiplication accelerator complements this unit, handling the computationally intensive operations found in neural networks.
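To make the idea of adjusting vector length to the workload concrete, here is a minimal Python sketch of the strip-mining loop that vector-length-agnostic RISC-V code typically uses. The `vsetvl` function below is a simplified stand-in for the RVV instruction of the same name (real hardware may grant a smaller length in some cases), and `vector_add` and `vlmax` are illustrative names, not part of any real API.

```python
def vsetvl(remaining: int, vlmax: int) -> int:
    """Simplified model of RVV vsetvl: grant at most vlmax elements."""
    return min(remaining, vlmax)


def vector_add(a, b, vlmax):
    """Add two equal-length lists in vector-length-agnostic strips."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    out = []
    strips = []  # vector length granted on each pass, for inspection
    i = 0
    while i < len(a):
        vl = vsetvl(len(a) - i, vlmax)
        # One "vector instruction": operate on vl elements at once.
        out.extend(x + y for x, y in zip(a[i:i + vl], b[i:i + vl]))
        strips.append(vl)
        i += vl
    return out, strips


# The same code runs unchanged on a narrow or a wide vector unit.
narrow = vector_add(list(range(10)), [1] * 10, vlmax=4)   # strips: [4, 4, 2]
wide = vector_add(list(range(10)), [1] * 10, vlmax=16)    # strips: [10]
```

The loop never hard-codes a vector width, which is why the same binary could in principle run on vector chiplets of different sizes.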

Other specialized chiplets, such as tensor cores, cryptography engines, and additional AI/ML accelerators, can raise efficiency and performance in the same way as the matrix multiplication accelerator. To address the memory bottlenecks that often slow down AI inference and training, high-bandwidth on-package memory chiplets integrate closely with the compute units, reducing latency and improving data flow. A scalar processor chiplet manages these interactions, overseeing execution scheduling, memory allocation, and communication across the entire system.

One of the fundamental challenges in AI acceleration is mitigating instruction stalls caused by memory latency. Traditional GPUs rely on speculative execution and complex replay mechanisms to handle these delays, but a chiplet-based RISC-V vector processor could take a different approach by implementing time-based execution scheduling. Instructions are pre-scheduled into execution slots, which eliminates the need for register renaming and reduces overhead. When a load stalls, an execution time freezing mechanism pauses execution until the data arrives, so the pre-computed schedule stays valid. This ‘Fire and Forget’ time-based scheduling aims to keep the vector units highly utilized and to hide memory latency, while preserving parallelism at low power and with minimal overhead.
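The scheduling idea can be illustrated with a toy model. The Python sketch below is an assumption-laden illustration, not the patented design: it models a single-issue, in-order machine in which each instruction gets a fixed slot computed from predicted latencies, and a late load is handled by freezing the time counter rather than rescheduling. The names `Instr`, `schedule`, and `wall_clock` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Instr:
    name: str
    dest: str
    srcs: tuple
    latency: int  # predicted latency in cycles


def schedule(program):
    """Assign each instruction a fixed time slot from predicted latencies."""
    ready = {}     # register -> time at which its value is available
    slots = {}     # instruction name -> scheduled time
    next_slot = 0  # single-issue, in-order: one instruction per slot
    for ins in program:
        start = max([next_slot] + [ready.get(r, 0) for r in ins.srcs])
        slots[ins.name] = start
        ready[ins.dest] = start + ins.latency
        next_slot = start + 1
    return slots


def wall_clock(slots, freezes):
    """Map scheduled times to real cycles when the time counter freezes.

    freezes maps a scheduled time t to the extra cycles the counter is
    held just before slot t fires (e.g. a load that returns late).
    """
    actual = {}
    for name, t in slots.items():
        held = sum(extra for at, extra in freezes.items() if at <= t)
        actual[name] = t + held
    return actual


program = [
    Instr("ld", "v1", (), 3),        # load, predicted 3 cycles
    Instr("add", "v2", ("v1",), 1),  # consumes the load result
    Instr("mul", "v3", ("v2",), 2),
    Instr("ld2", "v4", (), 3),
]
slots = schedule(program)            # {'ld': 0, 'add': 3, 'mul': 4, 'ld2': 5}
late = wall_clock(slots, {3: 5})     # load data arrives 5 cycles late
# late == {'ld': 0, 'add': 8, 'mul': 9, 'ld2': 10}
```

In this model a late load shifts every later slot by the same amount, so the relative schedule computed at issue time never has to be rebuilt.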

Chiplet communication plays a pivotal role in determining overall system performance. Unlike monolithic GPUs that rely on internal bus architectures, a chiplet-based AI accelerator needs a high-speed interconnect to maintain seamless data transfer. The adoption of UCIe (Universal Chiplet Interconnect Express) could provide an efficient die-to-die communication framework, reducing latency between compute and memory units. An optimized network-on-chip (NoC) further ensures that vector instructions and matrix operations flow efficiently between chiplets, preventing bottlenecks in high-throughput AI workloads.

Competing with NVIDIA’s ecosystem requires more than just hardware innovation, but the hardware case comes first. Higher vector unit utilization keeps the vector pipeline active, raising throughput and reducing idle cycles. Fewer stalls and pipeline flushes keep instruction flow smooth and efficient. Pausing execution only when needed cuts unnecessary power consumption, and instruction scheduling that aligns vector execution with data availability boosts overall performance.

Software plays an equally important role in adoption and usability. A robust compiler stack optimized for RISC-V vector and matrix extensions ensures that AI models can take full advantage of the hardware. Custom libraries tailored for deep learning frameworks such as PyTorch and TensorFlow bridge the gap between application developers and hardware acceleration. A transpilation layer such as CuPBoP (CUDA for Parallelized and Broad-range Processors) enables seamless migration from existing GPU-centric AI infrastructure, lowering the barrier to adoption.

CuPBoP presents a compelling pathway for enabling CUDA workloads on non-NVIDIA architectures. By supporting multiple Instruction Set Architectures (ISAs), including RISC-V, CuPBoP enhances cross-platform flexibility, allowing AI developers to execute CUDA programs without the need for intermediate portable programming languages. Its high CUDA feature coverage makes it a robust alternative to existing transpilation frameworks, ensuring greater compatibility with CUDA-optimized AI workloads. By leveraging CuPBoP, RISC-V developers could bridge the gap between CUDA-native applications and high-performance RISC-V architectures, offering an efficient, open-source alternative to proprietary GPU solutions.
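The general technique behind running CUDA-style kernels on a CPU can be sketched as wrapping the per-thread kernel body in loops over blocks and threads. The Python below illustrates only that idea; it is not CuPBoP's implementation or API, and `vec_add_kernel` and `launch` are hypothetical names.

```python
def vec_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body of a CUDA-style kernel, written for one logical thread."""
    i = block_idx * block_dim + thread_idx
    if i < len(out):  # bounds guard, as in typical CUDA code
        out[i] = a[i] + b[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Run a 1-D grid on a CPU by looping over blocks, then threads."""
    for block_idx in range(grid_dim):        # blocks could map to CPU cores
        for thread_idx in range(block_dim):  # thread loop, a vectorization target
            kernel(block_idx, thread_idx, block_dim, *args)


a = [1, 2, 3, 4, 5]
b = [10] * 5
out = [0] * 5
launch(vec_add_kernel, 2, 4, a, b, out)  # 2 blocks of 4 threads cover 5 elements
```

Kernels that use barriers such as `__syncthreads()` need extra handling, typically by splitting the thread loop at each barrier, which this sketch leaves out.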

Energy efficiency is another area where a chiplet-based RISC-V accelerator can differentiate itself from power-hungry GPUs. Fine-grained power gating allows inactive compute units to be dynamically powered down, reducing overall energy consumption. Near-memory computing further enhances efficiency by placing computation as close as possible to data storage, minimizing costly data movement. Optimized vector register extensions ensure that AI workloads make the most efficient use of available compute resources, further improving performance-per-watt compared to traditional GPU designs.
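Power gating only pays off when an idle period is long enough to recover the energy spent putting a unit to sleep and waking it again. The Python sketch below shows that break-even rule under simplifying assumptions: idle lengths are known in advance, and the energy figures are arbitrary illustrative units rather than measurements. `should_gate` and `idle_energy` are hypothetical names.

```python
def should_gate(idle_cycles, leak_per_cycle, gate_overhead):
    """Gate only if the leakage saved exceeds the sleep/wake cost."""
    return idle_cycles * leak_per_cycle > gate_overhead


def idle_energy(idle_periods, leak_per_cycle, gate_overhead):
    """Total energy spent across idle periods under the break-even policy."""
    total = 0
    for cycles in idle_periods:
        if should_gate(cycles, leak_per_cycle, gate_overhead):
            total += gate_overhead            # pay the sleep/wake cost once
        else:
            total += cycles * leak_per_cycle  # stay powered and leak
    return total


# Illustrative units only: leakage of 2 per cycle, gating overhead of 100.
periods = [5, 200, 40]
gated = idle_energy(periods, leak_per_cycle=2, gate_overhead=100)  # 10 + 100 + 80
ungated = sum(periods) * 2
```

Only the 200-cycle idle period crosses the break-even point here, which is why finer-grained gating depends on predicting idle lengths well.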

Interestingly, while the idea of a RISC-V chiplet-based AI accelerator remains largely unexplored in public discourse, there are signals that the industry is moving in this direction. Companies such as Meta, Google, Intel, and Apple have all made significant investments in RISC-V technology, particularly in AI inference and vector computing. However, most known RISC-V AI solutions, such as those from SiFive, Andes Technology, and Tenstorrent, still rely on monolithic SoCs or multi-core architectures, rather than a truly scalable, chiplet-based approach.

A recent pitch deck from Simplex Micro suggests that a time-based execution model and modular vector processing architecture could dramatically improve AI processing efficiency, particularly in high-performance AI inference workloads. While details on commercial implementations remain sparse, the underlying patent portfolio, listed in the table below, and the architectural insights indicate that the concept is technically feasible.

| Patent # | Patent Title | Granted |
| --- | --- | --- |
| US-11829762-B2 | Time-Resource Matrix for a Microprocessor | 11/28/2023 |
| US-12001848-B2 | Phantom Registers for a Time-Based CPU | 11/12/2024 |
| US-11954491-B2 | Multi-Threaded Microprocessor with Time-Based Scheduling | 4/9/2024 |
| US-12147812-B2 | Out-of-Order Execution for Loop Instructions | 11/19/2024 |
| US-12124849-B2 | Non-Cacheable Memory Load Prediction | 10/22/2024 |
| US-12169716-B2 | Time-Based Scheduling for Extended Instructions | 12/17/2024 |
| US-11829767-B2 | Time-Aware Register Scoreboard | 11/28/2023 |
| US-11829762-B2 | Statically Dispatched Time-Based Execution | 11/28/2023 |
| US-12190116-B2 | Optimized Instruction Replay System | 1/7/2025 |

The strategic positioning of such an AI accelerator depends on the target market. Data centers seeking alternatives to proprietary GPU architectures would benefit from a flexible, high-performance RISC-V-based AI solution. Edge AI applications, such as augmented reality, autonomous systems, and industrial IoT, could leverage the power efficiency of a modular vector processor to run AI workloads locally without relying on cloud-based inference. By offering a scalable, customizable solution that adapts to the needs of different AI applications, a chiplet-based RISC-V vector accelerator has the potential to challenge NVIDIA’s dominance.

As AI workloads continue to evolve, the limitations of traditional monolithic architectures become more apparent. A chiplet-based RISC-V vector processor is modular, scalable, customizable, high-performance, power-efficient, and cost-effective, which suits AI, ML, and HPC within an open-source ecosystem, and it represents a shift toward a more adaptable and energy-efficient approach to AI acceleration. By integrating time-based execution, high-bandwidth interconnects, and workload-specific optimizations, this emerging architecture could pave the way for the next generation of AI hardware, redefining the balance between performance, power, and scalability.

