FPGA prototyping and hardware emulation arose independently at roughly the same time from a common need: implementing digital designs in reconfigurable hardware. The newly introduced field-programmable gate array (FPGA) made this possible.

Yet from the very beginning they were driven by different motivations.

Hardware emulation emerged from the need to tame complexity. As integrated circuits grew larger, engineers could no longer rely on simulation alone to validate designs with millions of transistors. Emulation promised to overcome simulation’s limitations while still allowing controlled execution and powerful debugging.

FPGA prototyping, by contrast, grew out of the drive for speed and real-world execution. Its purpose was not primarily to debug signals deep inside the design, but to run the design fast enough to enable meaningful software development, system validation, and application workloads, long before silicon existed.

For decades, these two approaches lived in largely separate universes, shaped not only by distinct technical goals but also by different engineering mindsets and even cultural identities. Emulation belongs to the engineer’s desk, a world of computer-driven waveform analysis and controlled verification workflows. Prototyping, by contrast, belongs to the lab bench, defined by the sensory reality of cables, hardware peripherals, and real-time execution.

The boundary between them has always been somewhat nebulous and poorly defined. Over time, however, market forces, customer demands, and the growing dominance of software-defined systems steadily pushed these paths closer together. Today, emulation and prototyping are no longer isolated disciplines. They coexist under a shared hardware-assisted verification (HAV) umbrella, increasingly complementary in workflows that span functional correctness, performance analysis, power validation, and full software-stack system bring-up.

Understanding how these two worlds rose, diverged, and ultimately began to converge is essential to understanding where verification is headed next.

The Origins of Prototyping: Breadboarding with Wooden Boards

My earliest memories of hardware design verification take me back more than four decades, to an internship I completed at SIT-Siemens in Milan, Italy. At the time, I designed and built a digital clock using discrete TTL logic, a project that became the subject of my doctoral thesis and the culmination of my electronic engineering degree.

Looking back, what is striking is how radically different the landscape was. In those years, design verification was not an industry buzzword. In fact, there was no verification industry at all. There were no standardized methodologies, no dedicated verification engineers, and certainly no sophisticated platforms for pre-silicon validation. Hardware was tested the only way engineers knew how: by physically building it.

The dominant practice was breadboarding: literally constructing prototypes on wooden boards. Nails served as connection nodes. Wires were routed, wrapped, soldered, and adjusted by hand. The process required equal measures of creativity, patience, and perseverance. Later, wooden boards were replaced by wire-wrap boards, which allowed higher density and more complex routing, though the work was still entirely manual.

Eventually, designers moved to printed circuit boards populated with sockets and discrete components. This was progress, but it was hardly “rapid.” Each iteration often required new boards, careful assembly, and constant troubleshooting, and debugging meant chasing elusive errors with physical instrumentation rather than inspecting signals with software tools. Prototyping was essential, but it was slow, costly, and highly error-prone. And a fundamental ambiguity always lingered: was the failure caused by the design itself or by the prototype implementation?

Field-Programmable Gate Arrays (FPGAs) to the Rescue

Everything changed with the arrival of the field-programmable gate array.

FPGAs introduced a novel idea: instead of rebuilding hardware every time the design evolved, engineers could map the design-under-test (DUT) directly into programmable silicon. With that shift, testing moved from a dirty-hands discipline—wooden boards, nails, solder, and hammers—to something far closer to a white-glove workflow.

Prototyping became cleaner, faster, and dramatically more scalable.

In many ways, FPGA prototyping was born directly from the hardware designer’s bench. It remained a hands-on, roll-up-your-sleeves craft, but now empowered by re-programmability and speed. Engineers could run real workloads, connect real peripherals, and observe system behavior at near real-time performance.

For the first time, validation could extend beyond synthetic test vectors. Systems could boot operating systems. Software teams could begin development before tape-out. Hardware could be exercised not only for correctness, but for integration realism.

This was the beginning of a new era: one in which verification was no longer confined to signal-level inspection but expanded toward system-level execution.

FPGA prototyping became the bridge between the abstract world of RTL and the concrete world of deployed electronic systems.

Emulation’s Parallel Rise: Debugging Designs Too Big to Simulate

While FPGA prototyping was taking shape on the engineer’s bench as a practical path toward speed, system validation, and early software execution, a different pressure was building elsewhere in the industry.

Designs were exploding in size.

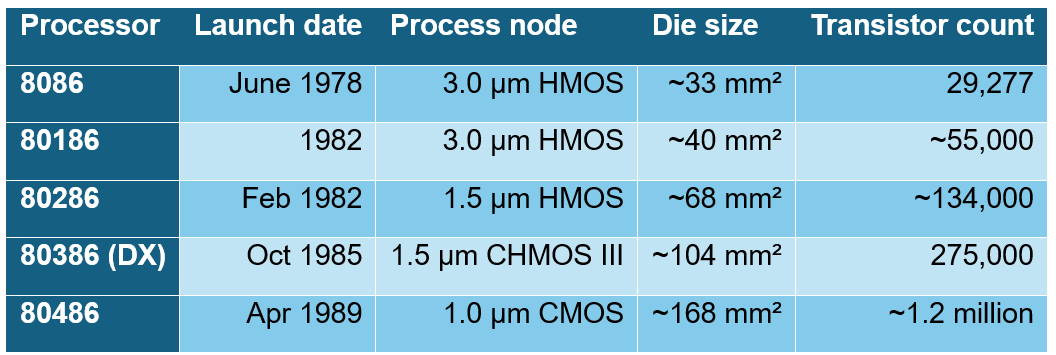

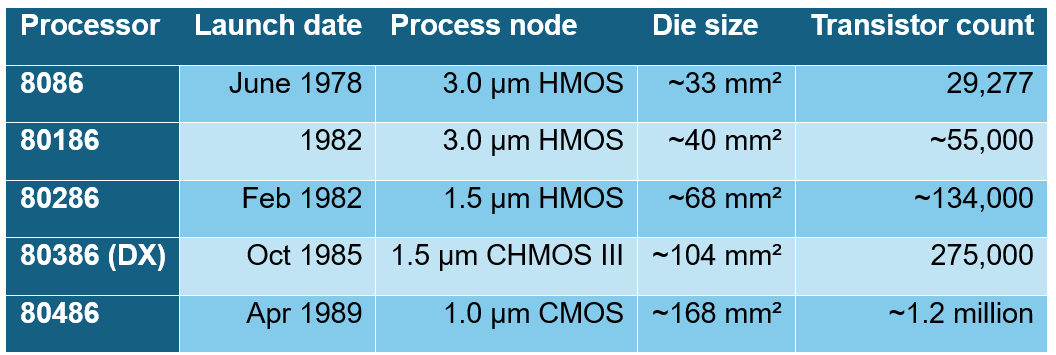

Between the mid-1980s and early 1990s, the semiconductor landscape underwent a structural disruption. Driven by the relentless shrinking of transistor size and falling cost, integrated circuits (ICs) exploded from thousands of transistors to millions. Logic was no longer a series of isolated blocks; it was the entire system, condensed onto a single sliver of silicon.

Simulation, which had been the workhorse of verification, began to buckle under the weight of this complexity. Not only gate-level simulators but even the hardware-description-language (HDL) simulators introduced in the 1990s were becoming increasingly slow. Despite remarkable progress in compute power, the gap between design size and simulation throughput widened year after year. Furthermore, as designs grew in size, the number of test cycles required to validate them expanded exponentially, stretching the verification schedule beyond tape-out. Comprehensive fault analysis became increasingly out of reach.

It was in this context that hardware emulation emerged—not from the desire to run software fast, but from the need to verify hardware deeply, systematically, and with control. Emulation was born as an answer to complexity.

Two Different Philosophies: Visibility Over Speed

From the beginning, FPGA prototyping and emulation embodied two fundamentally different philosophies. Where prototyping prioritized execution speed and system realism, emulation prioritized control, observability, and debugging.

FPGA prototypes could run fast, often at tens or even hundreds of megahertz, but they were notoriously opaque. Once a design was mapped into a sea of programmable logic, internal signals became difficult to access, waveform visibility was limited to the internal probes compiled into the design, and adding or changing probes required a painful recompilation. Prototyping excelled at at-speed execution and early system bring-up, but not necessarily at uncovering the root cause of elusive failures buried deep inside the design.

Hardware emulation, by contrast, was conceived as a verification instrument: a specialized platform designed to execute hardware models orders of magnitude faster than simulation, while still preserving the introspection, controllability, and determinism needed to debug quickly and reliably. Emulators were built from the ground up to support the verification workflow. They provided deep signal visibility, full-state capture and replay, non-intrusive tracing, controlled execution with breakpoints, and rapid debug iteration. Their primary purpose was to find and fix bugs, whether deep within complex RTL or at the hardware/software interface, making emulation the natural domain of verification engineers rather than system integrators.

These distinct philosophies were also reflected in operating modes and deployment.

Early emulators often ran in in-circuit emulation (ICE) mode, cabled directly to target systems and driven by real external stimulus. Over time, emulation evolved toward increasingly virtualized environments, integrated with software testbenches, transaction-level models, and hybrid simulation flows. Typical emulation speeds remained in the low single-digit megahertz range—fast enough to escape simulation’s limits but still optimized for observability rather than throughput.

FPGA prototyping, on the other hand, prioritized real-time performance and physical connectivity. It was most effective for designs and subsystems that could fit within a handful of FPGAs, enabling extensive software development and interoperability testing long before silicon availability. Prototypes were designed to operate directly with real-world interfaces such as PCIe, USB, Ethernet, and DDR memory, delivering near-system-speed execution.
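To put the speed gap in perspective, here is a back-of-the-envelope sketch in Python. The clock rates for emulation and prototyping are picked from the ranges quoted above; the simulation rate and the workload size are purely my own illustrative assumptions, not measurements from any particular design or tool.

```python
# Rough wall-clock estimate for running one workload on three platforms.
# Every number here is an illustrative assumption, not a measurement.

# Assumed workload: about 10 billion design clock cycles (e.g., a software boot).
WORKLOAD_CYCLES = 10_000_000_000

# Assumed effective clock rates in hertz.
PLATFORM_HZ = {
    "HDL simulation": 1_000,        # assumption: ~1 kHz for a large design
    "Emulation": 2_000_000,         # low single-digit megahertz
    "FPGA prototype": 50_000_000,   # tens of megahertz
}


def wall_clock_seconds(cycles: int, clock_hz: float) -> float:
    """Return the time needed to execute `cycles` at `clock_hz`."""
    return cycles / clock_hz


def format_duration(seconds: float) -> str:
    """Render a duration in the largest convenient unit."""
    if seconds >= 86_400:
        return f"{seconds / 86_400:.1f} days"
    if seconds >= 3_600:
        return f"{seconds / 3_600:.1f} hours"
    if seconds >= 60:
        return f"{seconds / 60:.1f} minutes"
    return f"{seconds:.1f} seconds"


if __name__ == "__main__":
    for name, hz in PLATFORM_HZ.items():
        duration = format_duration(wall_clock_seconds(WORKLOAD_CYCLES, hz))
        print(f"{name:>15}: {duration}")
```

With these assumed numbers, the same workload takes months in simulation, about an hour and a half in emulation, and a few minutes on a prototype. The exact figures will vary widely by design and platform; the point is the orders of magnitude that separate the three.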

Organizationally, emulation became a centralized, shared resource: a large platform managed by specialists and accessed by distributed teams of verification and software engineers worldwide for deep-dive debugging and pre-silicon software bring-up. Prototyping, conversely, often lived on the hardware designer’s bench as a hands-on, roll-up-your-sleeves discipline optimized for speed, connectivity, and early system validation.

Separate Markets and Vendor Ecosystems

For many years, these two worlds were served by different vendor ecosystems.

The emulation market was dominated by the large EDA companies, whose platforms were tightly integrated into enterprise verification flows. FPGA prototyping, meanwhile, thrived in a vibrant “cottage industry” of smaller, specialized firms. These companies provided high-performance FPGA boards and practical tools aimed squarely at hardware engineers and system developers, and they relied mostly on industry-standard FPGA compilers.

The separation was not only technical but also commercial, cultural, and organizational.

The Commercial Convergence of Emulation and Prototyping

Around 2010, a significant market convergence began to take place. Recognizing the strategic importance of owning the entire HAV continuum, major EDA vendors began acquiring the smaller prototyping players. As a result, the “cottage industry” largely disappeared, and today prototyping solutions are offered primarily by the same three major EDA vendors that dominate emulation.

This consolidation fueled a long-held industry ambition: a single unified hardware platform that could serve both purposes, an ideal “one machine that does both” that could be configured as a high-visibility emulator or a high-performance prototype “with the push of a button.”

Vendors have made real progress toward this vision, with offerings such as Synopsys’s EP-Ready (Emulation and Prototyping) systems, originally announced in 2025. In the spring of 2026, Synopsys doubled down on this strategy by making all of its hardware systems “software-defined.”

Yet even unified platforms still require significant manual effort to transition between emulation-style instrumentation and prototyping-style performance. This highlights a crucial truth: the convergence has been primarily economic and commercial, not yet fully technical.

The fundamental engineering tension persists. The deep, intrusive instrumentation required for effective emulation is often at odds with the non-intrusive, high-speed, real-world operation demanded by prototyping. The “push-button” dream remains elusive because it requires solving this core conflict through advanced automation, smarter compilers, and more seamless workflow integration, challenges the industry continues to confront.

Conclusion: From Wooden Boards to Full-Stack Verification

The journey from wooden breadboards to white-glove verification platforms is more than a technological progression. It reflects how the semiconductor industry itself has evolved, from manual construction to programmable prototyping, through industrial-scale emulation, and now into full-stack system validation.

FPGA prototyping and emulation began as two separate worlds, shaped by different user goals, cultures, and constraints. Over time, driven by the unstoppable rise of software-defined systems and the unprecedented demands of AI-era computing, HAV has outgrown its original purpose.

Verification teams will continue to push the envelope across all software-driven use cases. The once-elusive goal of running them all on a common HAV platform is steadily becoming reality, one software release at a time. If you are a user of these new software-defined platforms, I am curious: how are they working out for you?
