As semiconductor manufacturing pushes deeper into the nanometer regime, computational lithography has evolved from a supporting step into a central pillar of advanced chip design. Mask synthesis, lithography simulation, and optical proximity correction (OPC) now demand unprecedented accuracy and computational throughput. At the heart of these workflows lies rasterization: the process of converting complex geometric layouts into ultra-high-resolution pixel grids.

Rasterization – Polygon to pixel-based representation

Siemens EDA recently published a whitepaper on this topic. It explains why rasterization has become a bottleneck and how a new rasterization algorithm designed for massively parallel GPUs addresses the challenge. Real-world performance results in the whitepaper show the technique's impact on next-generation semiconductor manufacturing.

Why Rasterization Matters More Than Ever in Lithography

Rasterization is often associated with computer graphics, but in electronic design automation (EDA), its role is far more consequential. In computational lithography, rasterized layouts are used to simulate how light propagates through masks and how photoresist responds at nanometer scales. Unlike graphics applications, where a pixel can be treated as simply on or off, lithography requires precise fractional pixel coverage and strict preservation of connectivity between extremely fine features. A tiny error introduced during rasterization can propagate through simulation and OPC loops, ultimately affecting yield and manufacturability.

As technology nodes shrink below a few nanometers, the resolution required for rasterization skyrockets, and the same operation must be repeated many times during iterative OPC flows. Even highly optimized CPU-based rasterizers struggle to keep up, turning rasterization into a dominant runtime bottleneck.

The Limits of Traditional Rasterization Approaches

Most traditional rasterization techniques rely on binary coverage models that work well for visualization but break down in lithography contexts. These approaches fail to capture subtle intensity variations and often introduce connectivity artifacts when dealing with thin lines or closely spaced features. At the same time, the sheer scale of modern layouts with billions of polygons and trillions of pixel evaluations places enormous pressure on memory bandwidth and compute resources.
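
To make the difference concrete, here is a minimal Python sketch (not taken from the whitepaper) that rasterizes a thin line in one dimension under both models. The function names, the center-sampling rule for the binary model, and the unit-pixel grid are assumptions chosen for illustration.

```python
def binary_coverage(x0, x1, n_pixels):
    """Binary model: a pixel is on only if its center lies inside [x0, x1)."""
    return [1.0 if x0 <= i + 0.5 < x1 else 0.0 for i in range(n_pixels)]


def fractional_coverage(x0, x1, n_pixels):
    """Fractional model: each unit pixel stores the fraction of its width covered."""
    return [max(0.0, min(x1, i + 1) - max(x0, i)) for i in range(n_pixels)]


# Hypothetical feature: a line 0.4 pixels wide that straddles pixels 1 and 2.
thin_line = (1.8, 2.2)
binary = binary_coverage(*thin_line, 4)
fractional = fractional_coverage(*thin_line, 4)

print(binary)      # every pixel is 0.0: the line misses all pixel centers
print(fractional)  # pixels 1 and 2 each hold about 0.2 of the line
```

In the binary result the feature vanishes entirely, while the fractional result keeps both its total width and the fact that it spans two adjacent pixels.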

This is where GPUs become attractive. Their massive parallelism is well suited to data-intensive workloads, but GPUs also present challenges, including irregular memory access patterns and sensitivity to numerical precision. Successfully using GPUs for lithography rasterization requires algorithms designed specifically for accuracy-first, massively parallel execution.

Rethinking Rasterization for GPUs

A GPU-optimized rasterizer for computational lithography starts with a fundamentally different mindset. Instead of sequentially processing polygons, the layout is spatially decomposed into independent regions that can be rasterized in parallel. Each region is mapped to GPU thread blocks, allowing thousands of threads to evaluate pixel coverage simultaneously.
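
The spatial decomposition step can be illustrated with a short Python sketch. It is a simplified assumption of how binning might work, not the whitepaper's code: shapes are restricted to axis-aligned rectangles, and `bin_rectangles` and `tile_size` are hypothetical names.

```python
import math
from collections import defaultdict


def bin_rectangles(rects, tile_size):
    """Map each tile index (tx, ty) to the rectangles that overlap that tile.

    Each rectangle is (x0, y0, x1, y1) with x0 < x1 and y0 < y1.
    """
    tiles = defaultdict(list)
    for rect in rects:
        x0, y0, x1, y1 = rect
        # Range of tile columns and rows touched by this rectangle.
        for ty in range(math.floor(y0 / tile_size), math.ceil(y1 / tile_size)):
            for tx in range(math.floor(x0 / tile_size), math.ceil(x1 / tile_size)):
                tiles[(tx, ty)].append(rect)
    return dict(tiles)


# Hypothetical layout: the second rectangle crosses the boundary at x = 8.
layout = [(1, 1, 3, 3), (6, 2, 10, 4)]
tiles = bin_rectangles(layout, tile_size=8)
print(tiles)
# {(0, 0): [(1, 1, 3, 3), (6, 2, 10, 4)], (1, 0): [(6, 2, 10, 4)]}
```

A shape that crosses a tile boundary is listed in every tile it touches. Because each tile only evaluates its own pixels, the tiles stay independent and no coverage is counted twice.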

Example of pixel classification and processing

Fractional pixel coverage is computed analytically in floating-point arithmetic rather than approximated, so boundary interactions are handled with nanometer-scale precision. Special care is taken to preserve sub-pixel connectivity so that thin features are not inadvertently broken during rasterization. Manhattan geometries benefit from simplified evaluation paths, while curvilinear shapes are handled using more general, yet still parallel-friendly, methods.
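
One reason Manhattan shapes allow a simpler path is that the overlap between an axis-aligned rectangle and a pixel factors into two one-dimensional overlaps. The Python sketch below shows this under the assumption of unit-sized pixels; the function names are hypothetical and the code is illustrative rather than the whitepaper's method.

```python
def overlap_1d(a0, a1, b0, b1):
    """Length of the overlap between intervals [a0, a1] and [b0, b1]."""
    return max(0.0, min(a1, b1) - max(a0, b0))


def rect_coverage(rect, width, height):
    """Fractional coverage of each unit pixel by one axis-aligned rectangle."""
    x0, y0, x1, y1 = rect
    return [
        [
            overlap_1d(x0, x1, px, px + 1) * overlap_1d(y0, y1, py, py + 1)
            for px in range(width)
        ]
        for py in range(height)
    ]


# Hypothetical 2.0 x 1.0 rectangle offset by half a pixel in both directions.
grid = rect_coverage((0.5, 0.5, 2.5, 1.5), width=3, height=2)
print(grid)  # [[0.25, 0.5, 0.25], [0.25, 0.5, 0.25]]
```

The per-pixel values sum to 2.0, the area of the rectangle, so no coverage is lost or invented at the boundaries.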

How the GPU Rasterization Pipeline Works

The rasterization pipeline begins with CPU-side preprocessing, where layout data is parsed and binned into spatial tiles. These tiles are transferred to the GPU in memory layouts optimized for coalesced access. On the GPU, each tile is processed independently: geometry is cached in shared memory, threads are assigned to pixels or small pixel groups, and each thread computes whether its pixel lies inside a polygon, outside it, or on its boundary.

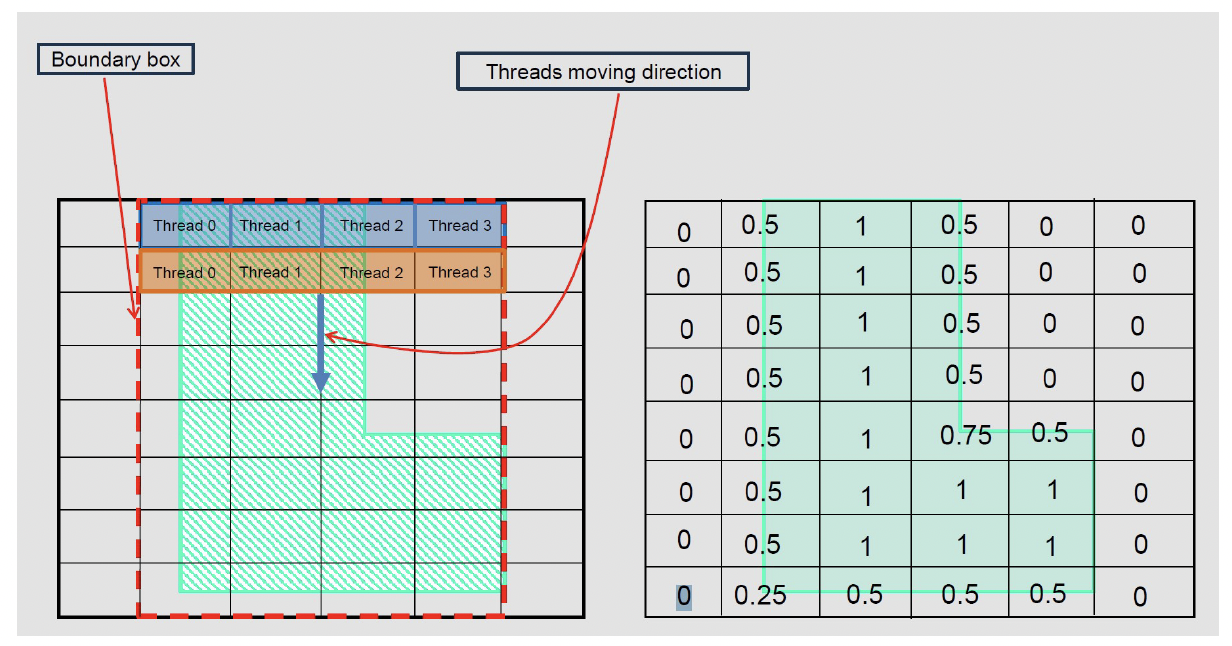

Rasterization of L-shape using block of threads
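
The per-pixel classification described above can be sketched in plain Python, with nested loops standing in for the GPU threads. This is an illustrative sketch, not the whitepaper's implementation: the L-shape coordinates, its decomposition into two non-overlapping rectangles, the tolerance `EPS`, and the labels `I` (inside), `O` (outside), and `B` (boundary) are all assumptions.

```python
EPS = 1e-12  # tolerance for treating a pixel as fully covered or fully empty


def pixel_area_covered(rects, px, py):
    """Area of unit pixel (px, py) covered by non-overlapping rectangles."""
    total = 0.0
    for x0, y0, x1, y1 in rects:
        w = max(0.0, min(x1, px + 1) - max(x0, px))
        h = max(0.0, min(y1, py + 1) - max(y0, py))
        total += w * h
    return total


def classify_tile(rects, width, height):
    """Label every pixel of a tile as inside, outside, or boundary."""
    labels = []
    for py in range(height):      # on a GPU, each (px, py) pair would map
        row = ""                  # to one thread of the tile's thread block
        for px in range(width):
            area = pixel_area_covered(rects, px, py)
            if area >= 1.0 - EPS:
                row += "I"
            elif area <= EPS:
                row += "O"
            else:
                row += "B"
        labels.append(row)
    return labels


# Hypothetical L-shape: a vertical bar plus a foot, sharing the edge x = 2.
l_shape = [(0.5, 0.5, 2.0, 3.5), (2.0, 0.5, 3.5, 2.0)]
labels = classify_tile(l_shape, width=4, height=4)
print(labels)  # ['BBBB', 'BIIB', 'BIOO', 'BBOO'], row 0 is y in [0, 1)
```

Only the pixels labeled `B` need the more expensive fractional-area evaluation; the `I` and `O` pixels can be written directly as 1 and 0.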

Boundary pixels receive special treatment. Polygon edges intersecting a pixel are analytically evaluated, and the fractional area covered is computed precisely. Atomic operations ensure correct accumulation when multiple polygons affect the same pixel. This design achieves both high performance and deterministic accuracy, two properties that are rarely achieved together in large-scale parallel systems.
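
For a general polygon edge, one way to evaluate the covered area analytically is to clip the polygon against the pixel square and apply the shoelace formula to the result. The Python sketch below uses Sutherland-Hodgman clipping as a stand-in; the whitepaper summary does not say which method is used, so the technique, the function names, and the triangle are assumptions. The plain `+=` plays the role of the atomic accumulation.

```python
def clip_half_plane(points, axis, bound, keep_greater):
    """Keep the part of a polygon on one side of an axis-aligned line."""
    def inside(p):
        return p[axis] >= bound if keep_greater else p[axis] <= bound

    def intersect(p, q):
        t = (bound - p[axis]) / (q[axis] - p[axis])
        return (p[0] + t * (q[0] - p[0]), p[1] + t * (q[1] - p[1]))

    out = []
    for i, cur in enumerate(points):
        prev = points[i - 1]
        if inside(cur):
            if not inside(prev):
                out.append(intersect(prev, cur))
            out.append(cur)
        elif inside(prev):
            out.append(intersect(prev, cur))
    return out


def polygon_area(points):
    """Shoelace formula; abs() makes the result independent of winding."""
    s = 0.0
    for i, (x1, y1) in enumerate(points):
        x0, y0 = points[i - 1]
        s += x0 * y1 - x1 * y0
    return abs(s) / 2.0


def pixel_coverage(polygon, px, py):
    """Exact area of the polygon inside unit pixel (px, py)."""
    pts = list(polygon)
    for axis, bound, keep_greater in (
        (0, px, True), (0, px + 1, False), (1, py, True), (1, py + 1, False)
    ):
        pts = clip_half_plane(pts, axis, bound, keep_greater)
    return polygon_area(pts)


def rasterize(polygons, width, height):
    """Accumulate the coverage of every polygon into one pixel grid."""
    grid = [[0.0] * width for _ in range(height)]
    for polygon in polygons:
        for py in range(height):
            for px in range(width):
                # A GPU version would use an atomic add here (assumption).
                grid[py][px] += pixel_coverage(polygon, px, py)
    return grid


# Hypothetical right triangle whose hypotenuse cuts two pixels diagonally.
triangle = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0)]
grid = rasterize([triangle], width=2, height=2)
print(grid)  # [[1.0, 0.5], [0.5, 0.0]]
```

The two pixels crossed by the hypotenuse each receive exactly half coverage, and the grid total of 2.0 matches the triangle's area.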

The implementation leverages the CUDA programming model and is particularly effective on modern data-center GPUs from NVIDIA, which provide the memory bandwidth and concurrency required for extreme-resolution rasterization.

Real-World Performance Results Using NVIDIA H100 GPUs

Performance benchmarking paints a compelling picture. When compared with highly optimized CPU-based rasterizers, the GPU approach delivers dramatic speedups across a range of layouts. For designs dominated by Manhattan geometries, speedups of up to 290× have been observed. Even for more challenging curvilinear layouts, the GPU rasterizer achieves speedups of up to 45×.

Crucially, these gains do not come at the expense of accuracy. Across all test cases, absolute error remains below one percent relative to reference CPU calculations. This level of precision meets the stringent requirements of computational lithography and confirms that massive parallelism can coexist with nanometer-scale accuracy.

Why This Matters for EDA and Manufacturing

The implications of GPU-accelerated rasterization extend far beyond raw performance metrics. Faster rasterization shortens OPC and mask synthesis cycles, enabling more iterations within the same design window. This leads to better correction quality, improved yield, and reduced time to market. High accuracy and connectivity preservation ensure that these gains do not introduce new risks into manufacturing flows.

As designs increasingly incorporate complex, non-Manhattan geometries and as simulation fidelity continues to rise, the scalability of GPU-based rasterization becomes even more valuable. What was once a bottleneck becomes a scalable, future-proof component of the lithography pipeline.

Summary

Massively parallel GPU rasterization represents a significant shift in how computational lithography workloads are approached. As GPU architectures continue to evolve, offering more cores and higher memory bandwidth, the performance advantages of this approach are likely to grow. Future work will focus on deeper integration with existing EDA platforms, support for heterogeneous CPU–GPU workflows, and extensions to more advanced lithography models and three-dimensional effects.

You can download the entire whitepaper from here.
