At the recent TSMC OIP symposium, Bill Acito from Cadence and Chin-her Chien from TSMC gave an insightful presentation on their recent collaboration to support TSMC’s Integrated Fan-Out (InFO) packaging solution. The chip and package implementation environments remain quite separate, and the issues uncovered in bridging that gap were subtle. The approaches that Cadence described to tackle these issues are another example of the productive alliance between TSMC and its EDA partners.

WLCSP Background

Wafer-level chip-scale packaging (WLCSP) was introduced in the late 1990s, and has evolved to provide an extremely high-volume, low-cost solution.

Wafer fabrication processing is used to add solder bumps to the die top surface at a pitch compatible with direct printed circuit board assembly; no additional substrate or interposer is used. A top-level thick metal redistribution layer (RDL) is used to connect from pads at the die periphery to bump locations. The common terminology for this pattern is a “fan-in design”, as the RDL connections are directed inward from the pads to the bump array.

[Ref: “WLCSP”, Freescale Application Note AN3846]

WLCSP surface-mount assembly is now a well-established technology. Yet the fragility of the tested-good silicon die during the subsequent dicing, pick (from the wafer or from tape-and-reel), place, and PCB assembly steps remains a concern.

To protect the die, a backside epoxy can be applied prior to dicing. To further enhance post-assembly attach strength and reliability, an underfill resin with an appropriate coefficient of thermal expansion is injected between the die and the PCB.

A unique process can be used to provide further protection of the backside and also the four sides of the die prior to assembly – an “encapsulated” WLCSP. This process involves separating and re-placing the die on a (300mm) wafer, which will be used as a temporary carrier.

The development of this encapsulation process has also enabled a new WLCSP offering, namely a “fan-out” pad-to-bump topology.

Chip technology scaling has enabled tighter pad pitch and higher I/O counts, which necessitate a “fan-out” design style to match the less aggressively scaled PCB pad technology. TSMC’s new InFO design enables a greater diversity of bump patterns. Indeed, it offers flexibility comparable to that of conventional (non-WLCSP) packaging.
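The fan-in versus fan-out trade-off can be seen with a little arithmetic. The sketch below is illustrative only: the function names and all dimensions are hypothetical, not TSMC or Cadence figures, and it assumes a full rectangular bump array with no keep-out regions. Dimensions are integers in micrometres.

```python
import math

def fan_in_capacity(die_w_um, die_h_um, bump_pitch_um):
    """Bumps in a full rectangular array that fits inside the die outline."""
    cols = die_w_um // bump_pitch_um
    rows = die_h_um // bump_pitch_um
    return cols * rows

def min_fan_out_side_um(io_count, bump_pitch_um):
    """Side of the smallest square package whose full array holds io_count bumps."""
    per_side = math.isqrt(io_count - 1) + 1  # integer ceiling of sqrt(io_count)
    return per_side * bump_pitch_um

# Hypothetical example: a 4 mm x 4 mm die and a 0.4 mm PCB-compatible bump pitch.
capacity = fan_in_capacity(4000, 4000, 400)
needed_side = min_fan_out_side_um(144, 400)
```

With these made-up numbers, the die outline holds only 100 bumps, so a 144-I/O design needs a bump array that extends beyond the die edge, which is exactly what the fan-out topology provides.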

Briefly, the fan-out technology starts by adding an adhesive layer to the wafer carrier. Die are (extremely accurately!) placed on this layer at a precise separation, face-down to protect the active top die surface. A molding compound is applied across the die backsides, then cured. The adhesive layer and carrier wafer are detached, resulting in a “reconstituted” wafer of fully-encapsulated die embedded in the compound:

(Source: TSMC. Molding between die highlighted in blue. WLCSP fan-out wiring to bumps extends outside the die area.)

This new structure can then be subjected to “conventional” wafer fabrication steps to complete the package:

- addition of dielectric and metal layer(s) to the die top surface

- patterning of metals and (through-molding) vias

- addition of Under Bump Metal, or UBM (optional, if the final RDL layer can be used instead)

- final dielectric and bump patterning

- dicing, with molding in place on all four sides and back

- back-grind to thin the final package

As illustrated in the figure, multi-chip and multi-layer wiring options are supported.

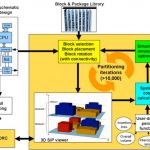

Cadence tool flow for InFO design and verification

Cadence described the tool enhancements developed to support the InFO package. The key issues arise from the “chip-like” fabrication process applied to the reconstituted wafer.

For InFO physical design, TSMC provides design rules in the familiar verification infrastructure for chip design, using a tool such as Cadence’s PVS. As an example, there are metal fill and metal density requirements associated with the fan-out metal layer(s) that are akin to existing chip design rules, a natural fit for PVS. (After the final package is backside-thinned, warpage is a major concern, requiring close attention to metal densities.)
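To make the idea of a metal density rule concrete, here is a minimal sketch of a windowed density check. It is not the PVS runset or TSMC's rule: the function names, window size, and density limits are all hypothetical, and it assumes the metal shapes are non-overlapping axis-aligned rectangles in integer units.

```python
def window_densities(rects, extent, window):
    """Metal density per square window.

    rects  : list of (x0, y0, x1, y1) non-overlapping metal rectangles
    extent : (width, height) of the region being checked
    window : side length of the checking window
    Returns {(ix, iy): density} with density in the range 0.0 to 1.0.
    """
    width, height = extent
    densities = {}
    for iy, wy0 in enumerate(range(0, height, window)):
        for ix, wx0 in enumerate(range(0, width, window)):
            wx1 = min(wx0 + window, width)
            wy1 = min(wy0 + window, height)
            metal = 0
            for x0, y0, x1, y1 in rects:
                # Overlap of this rectangle with the current window.
                ox = max(0, min(x1, wx1) - max(x0, wx0))
                oy = max(0, min(y1, wy1) - max(y0, wy0))
                metal += ox * oy
            densities[(ix, iy)] = metal / ((wx1 - wx0) * (wy1 - wy0))
    return densities

def density_violations(densities, lo, hi):
    """Windows whose density falls outside the (hypothetical) [lo, hi] limits."""
    return sorted(k for k, d in densities.items() if not lo <= d <= hi)

# Hypothetical layout: one fully covered window, one half covered, two empty.
rects = [(0, 0, 100, 100), (100, 0, 150, 100)]
dens = window_densities(rects, extent=(200, 200), window=100)
bad = density_violations(dens, lo=0.2, hi=0.8)
```

Windows that are too dense would be candidates for perforation, and windows that are too sparse would be candidates for metal fill.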

Yet, InFO design is undertaken by package designers familiar with tools such as Cadence’s Allegro Package Designer (APD) or SiP Layout, not Virtuoso. As a result, the typical data representation associated with package design (Gerber RS-274X) needs to be replaced with GDS-II.

Continuous arcs/circles and any-angle routing need to be “vectorized” in GDS-II streamout from the packaging tools, in a manner acceptable to the DRC runset, e.g., “no tiny DRC’s after vectorization”.
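As a rough illustration of what vectorization involves, the sketch below approximates an arc with straight segments so that the chord never deviates from the true arc by more than a given tolerance, and optionally snaps vertices to a grid. This is a generic geometric approach, not Cadence's streamout algorithm; the function name, tolerance, and grid values are assumptions. For a chord spanning angle θ on radius r, the maximum deviation (sagitta) is r(1 − cos(θ/2)), which gives the largest allowed angular step.

```python
import math

def vectorize_arc(cx, cy, r, start_deg, sweep_deg, tol, grid=None):
    """Approximate an arc by a polyline with chord error <= tol (before snapping).

    grid: optional snap grid; snapping may add up to about grid/sqrt(2) of error.
    """
    if not 0 < tol < r:
        raise ValueError("tolerance must satisfy 0 < tol < r")
    # Largest angular step whose sagitta r*(1 - cos(step/2)) stays within tol.
    max_step = 2.0 * math.acos(1.0 - tol / r)
    sweep = math.radians(sweep_deg)
    n = max(1, math.ceil(abs(sweep) / max_step))
    start = math.radians(start_deg)
    points = []
    for i in range(n + 1):
        a = start + sweep * i / n
        x = cx + r * math.cos(a)
        y = cy + r * math.sin(a)
        if grid:
            x = round(x / grid) * grid
            y = round(y / grid) * grid
        points.append((x, y))
    return points

# Hypothetical example: a full circle of radius 100 with a 0.5-unit tolerance.
circle = vectorize_arc(0.0, 0.0, 100.0, 0.0, 360.0, tol=0.5)
snapped = vectorize_arc(0.0, 0.0, 100.0, 0.0, 90.0, tol=0.5, grid=1.0)
```

A tighter tolerance yields more, shorter segments. A production flow would also need to confirm that the resulting edges and any grid snapping do not themselves trip the DRC runset.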

Viewing of PVS-generated DRC errors needs to be integrated into the package design tool environment.

Additionally, algorithms are needed to perforate wide metal into meshes. Routing algorithms were also enhanced for InFO. Fan-out bump placements (“ballout”) should be optimized during co-design, both for density and to minimize the number of RDL layers required from the chip pinout.
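The perforation step can be pictured with a simple sketch that cuts a regular grid of square holes into a wide rectangular plane and reports the resulting metal density. This is only one naive way to do it, not the algorithm Cadence implemented; the function names and the line-width and hole-size values are hypothetical.

```python
def perforate(plane, line_w, hole):
    """Square holes of side `hole`, separated and bordered by `line_w` of metal.

    plane: (x0, y0, x1, y1) in integer units. Returns a list of hole rectangles.
    """
    x0, y0, x1, y1 = plane
    pitch = line_w + hole
    holes = []
    y = y0 + line_w
    while y + hole + line_w <= y1:      # keep a full metal border at the top
        x = x0 + line_w
        while x + hole + line_w <= x1:  # keep a full metal border at the right
            holes.append((x, y, x + hole, y + hole))
            x += pitch
        y += pitch
    return holes

def mesh_density(plane, holes):
    """Fraction of the plane area still covered by metal after perforation."""
    x0, y0, x1, y1 = plane
    area = (x1 - x0) * (y1 - y0)
    removed = sum((hx1 - hx0) * (hy1 - hy0) for hx0, hy0, hx1, hy1 in holes)
    return (area - removed) / area

# Hypothetical example: a 100 x 100 plane, 10-unit mesh lines, 20-unit holes.
plane = (0, 0, 100, 100)
holes = perforate(plane, line_w=10, hole=20)
density = mesh_density(plane, holes)
```

In this example the solid plane drops from 100% to 64% metal. A real implementation would choose hole size and pitch to land inside the foundry's density window while preserving current-carrying capacity.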

For electrical analysis of the final design, integration of the InFO data with extraction, signal integrity, and power integrity tools (such as Cadence’s Sigrity) is required.

Cadence will be releasing an InFO design kit in partnership with TSMC, integrated with their APD and SiP products, to enable package designers to work seamlessly with (“chip design-like”) InFO WLCSP data. The bridging of these two traditionally separate domains is pretty exciting stuff.

-chipguy