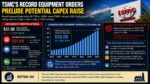

TSMC’s latest Board of Directors capital appropriation announcement may appear mixed on the surface, but a closer look reveals one important conclusion: The company is quietly setting the stage for another potential upward revision to its already aggressive 2026 capital expenditure outlook. The headline figure of $31.3B in newly approved capital appropriations was below the massive $45.0B approved in the prior quarter, yet the composition of this spending tells a much more constructive story for the semiconductor equipment ecosystem.

The most notable development is the continued acceleration in Advanced Node equipment investment. TSMC approved approximately $21.0B of Advanced Node-related equipment spending this quarter, representing the highest quarterly authorization level since we began tracking the company’s BoD capital approvals in 4Q19. Even though total approved spending declined sequentially, the shift toward leading-edge wafer fabrication equipment indicates that TSMC’s strategic focus remains firmly centered on expanding advanced logic capacity.

This distinction matters. Infrastructure spending and specialty technology investments can fluctuate depending on timing, construction schedules, or packaging initiatives. Advanced Node equipment approvals, however, are a far cleaner signal of future semiconductor manufacturing activity. They directly correlate with purchases of lithography, process control, deposition, etch, and metrology systems required for ramping leading-edge nodes such as N2 and A16.

At the same time, there was a notable absence of new approvals for Specialty Devices and Advanced Packaging. Last quarter’s record approval in this category was later understood to be tied to silicon photonics, CoWoS, and SoIC-related investments. The lack of follow-on approvals this quarter should not necessarily be interpreted as weakening demand. Rather, it likely reflects the exceptionally large allocation already approved previously. Given the long lead times and substantial scale of advanced packaging infrastructure deployment, TSMC may simply be digesting prior commitments before authorizing another major tranche of spending.

Infrastructure spending also normalized this quarter. The $10.3B approval level was meaningfully lower than the record $21.4B authorized in the prior quarter. However, this moderation appears more cyclical than structural. Infrastructure allocations often fluctuate depending on the timing of fab shell construction, utility expansion, overseas manufacturing projects, and regional government incentives. The key takeaway is that infrastructure moderation did not come alongside any slowdown in Advanced Node investment intensity.
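The composition argument can be checked with simple arithmetic. The sketch below uses only the approximate figures quoted above, in billions of US dollars. It treats Specialty Devices and Advanced Packaging as zero because no new approvals were reported in that category. The variable and function names are illustrative, not from any TSMC disclosure.

```python
# Approximate figures in US$ billions, as quoted in the text above.
current = {
    "advanced_node": 21.0,        # "approximately $21.0B"
    "infrastructure": 10.3,
    "specialty_packaging": 0.0,   # no new approvals this quarter
}
current_total = 31.3
prior_total = 45.0
prior_infrastructure = 21.4


def pct_change(new: float, old: float) -> float:
    """Sequential change from old to new, in percent."""
    return (new - old) / old * 100.0


# The category figures should add up to the headline number.
components_sum = sum(current.values())

# Share of this quarter's approvals going to Advanced Node equipment.
advanced_share = current["advanced_node"] / current_total * 100.0

total_qoq = pct_change(current_total, prior_total)
infra_qoq = pct_change(current["infrastructure"], prior_infrastructure)

print(f"Sum of categories:        ${components_sum:.1f}B")
print(f"Advanced Node share:      {advanced_share:.1f}%")
print(f"Total approvals, q/q:     {total_qoq:+.1f}%")
print(f"Infrastructure, q/q:      {infra_qoq:+.1f}%")
```

On these numbers the categories reconcile to the $31.3B headline, and Advanced Node equipment accounts for roughly two-thirds of the quarter's approvals even though the total fell sequentially.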

Perhaps the most important data point from this quarter is the emerging disconnect between approved future spending and TSMC’s current annual CapEx guidance. Assuming BoD capital appropriations generally represent roughly the next twelve months of spending activity, the trailing twelve-month Advanced Node equipment authorization level has now climbed to approximately $55.0B. That figure alone nearly matches TSMC’s entire current 2026 capital expenditure guidance of roughly $56B.

This creates an increasingly difficult mathematical setup. If Advanced Node equipment alone already represents nearly the full-year CapEx plan, then either spending cadence must slow materially in coming quarters or total CapEx guidance will need to move higher. Given current AI infrastructure demand trends, slowing investment appears unlikely.
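The setup described above can be made concrete with a short calculation. This is a rough sketch using the two approximate figures from the text (about $55.0B of trailing twelve-month Advanced Node authorizations against roughly $56B of 2026 guidance). The `other_spend` scenarios are purely hypothetical placeholders for infrastructure, packaging, and specialty spending. They are not estimates or forecasts.

```python
# Approximate figures in US$ billions, as quoted in the text above.
TTM_ADVANCED_NODE = 55.0      # trailing twelve-month Advanced Node approvals
CAPEX_GUIDANCE_2026 = 56.0    # current 2026 CapEx guidance ("roughly $56B")


def advanced_node_share(ttm: float, guidance: float) -> float:
    """Fraction of full-year guidance covered by Advanced Node approvals."""
    return ttm / guidance


def implied_capex(ttm: float, other_spend: float) -> float:
    """Total CapEx if Advanced Node approvals convert fully to spending
    and `other_spend` (hypothetical) is added for everything else."""
    return ttm + other_spend


share = advanced_node_share(TTM_ADVANCED_NODE, CAPEX_GUIDANCE_2026)
headroom = CAPEX_GUIDANCE_2026 - TTM_ADVANCED_NODE

print(f"Advanced Node share of guidance: {share:.1%}")
print(f"Headroom left for all other spending: ${headroom:.1f}B")

# Hypothetical scenarios only: illustrative values, not forecasts.
for other_spend in (5.0, 10.0, 15.0):
    total = implied_capex(TTM_ADVANCED_NODE, other_spend)
    gap = total - CAPEX_GUIDANCE_2026
    print(f"other spend ${other_spend:.0f}B -> implied ${total:.0f}B "
          f"(${gap:+.0f}B vs. guidance)")
```

Under the stated twelve-month conversion assumption, only about $1B of the current guidance would remain for every non-Advanced Node category, which is why any meaningful amount of other spending pushes the implied total above guidance.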

The broader industry backdrop strongly supports the latter scenario. AI-driven compute demand continues to accelerate across hyperscale data centers, sovereign AI projects, enterprise deployments, and edge inference applications. Leading-edge silicon demand remains supply constrained, particularly for advanced GPUs, AI accelerators, networking ASICs, and high-bandwidth memory integration. TSMC remains the dominant manufacturing partner for virtually all major AI chip developers, placing extraordinary pressure on its advanced manufacturing capacity roadmap.

As a result, TSMC’s quarterly CapEx run rate likely needs to increase further over the next twelve months. The company’s N2 ramp, advanced packaging expansion, overseas fab deployment, and ongoing EUV intensity growth all point toward sustained elevated investment levels. This is why the probability of a 2026 CapEx raise at TSMC’s 2Q26 earnings conference call in July appears to be increasing.

Bottom line: The latest TSMC approval data reinforces a critical industry theme. Despite periodic fluctuations in quarterly headline numbers, leading-edge semiconductor investment remains in a structural expansion phase. AI demand is fundamentally altering semiconductor infrastructure requirements, and TSMC’s capital allocation patterns continue to reflect that reality. The latest BoD approvals may ultimately be remembered less for the sequential decline in total authorizations and more as an early signal that TSMC’s current 2026 CapEx framework is already becoming too conservative.
