Recent talk of AMD vs Intel market share is misguided. The real race for superiority is TSMC vs Intel and, underlying that, tech dominance between the US and China.

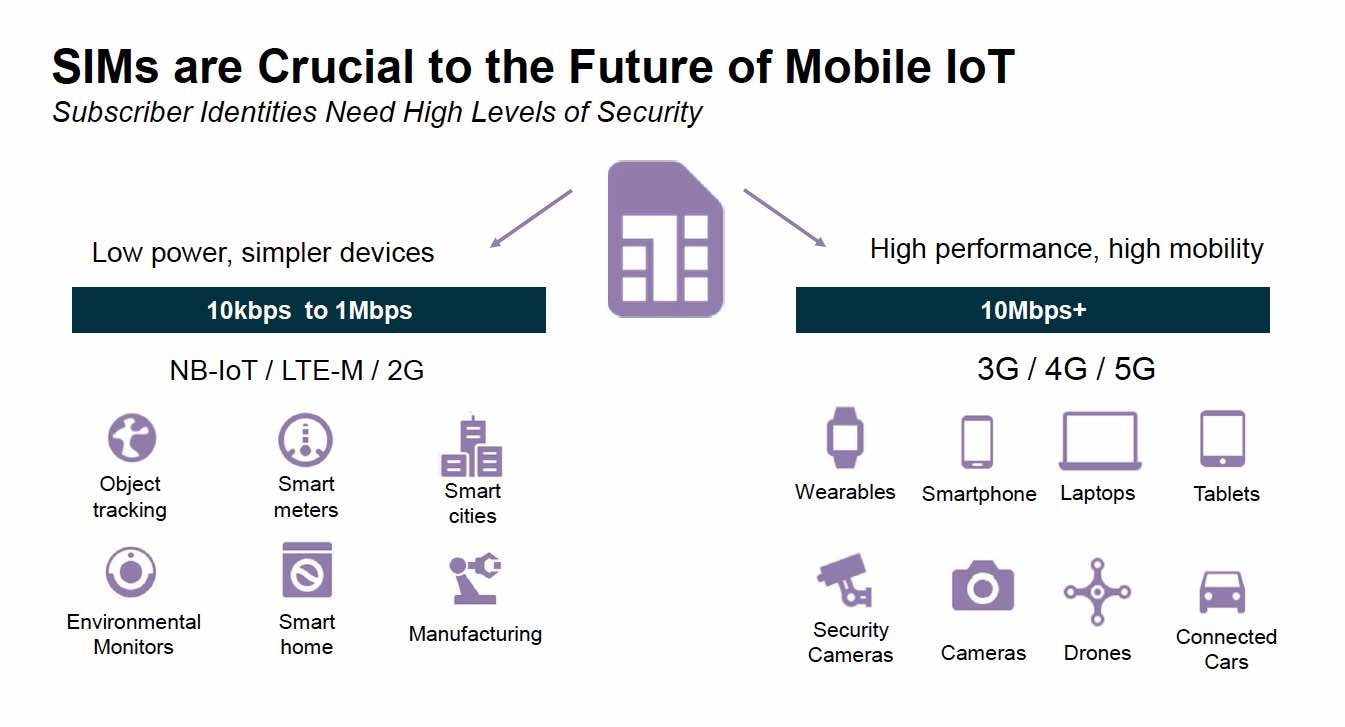

There has been much discussion of late about market share between Intel and AMD and how much share AMD will gain at Intel’s expense due to Intel’s very late 10NM technology node. These share shifts may be only the surface symptoms of a deeper conflict between Intel and TSMC and ultimately between the US and China for technology dominance.

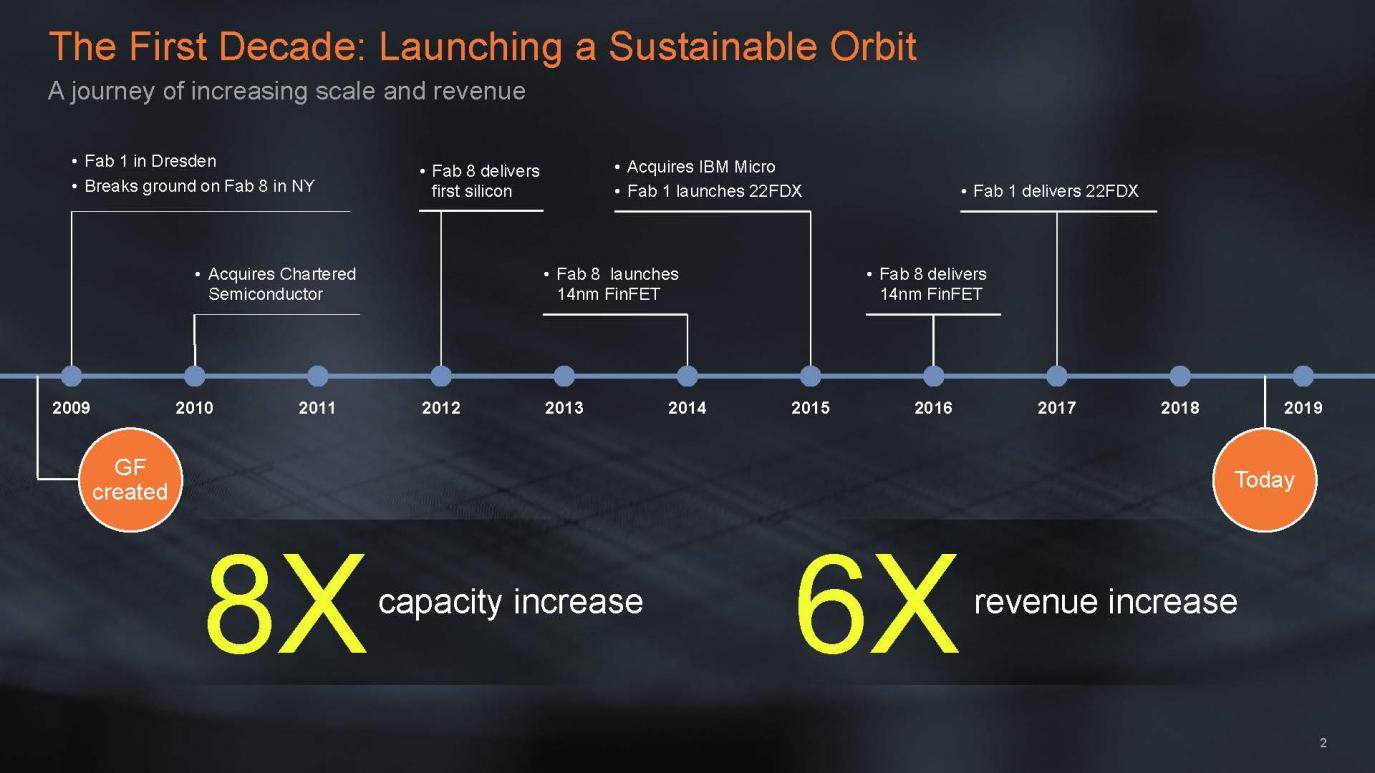

The real root cause of AMD’s resurgence also may not be only Intel’s stumble but GlobalFoundries’ stumble as well. GloFo’s inability to deliver to AMD allowed AMD to go outside of that relationship and hook up with TSMC, which “leapfrogged” it into a truly competitive position.

At some point, and it’s not a question of if but rather when, TSMC will be subsumed by China (along with Taiwan). This makes the AMD versus Intel story really all about future technology dominance between the US and China.

Right now TSMC (China) appears to be in the lead even though Intel (USA) appears to be finally recovering from its large 10NM stumble. TSMC long ago won the foundry wars as it makes Apple chips, communications chips and video chips. On the logic side, the only thing left for TSMC to win is CPU/PC/server chips, for which AMD will serve as the vehicle.

Although it can be argued that Samsung is still the number one chip maker by dollar volume, that is obviously due to its dominance of memory as it doesn’t compare to TSMC in the more technologically important foundry market.

Although memory is also obviously extremely important in today’s data obsessed world, we think China can more easily copy both NAND and DRAM than logic in the future. There are a number of memory fabs in China already starting on that quest.

With GloFo (owned by Abu Dhabi) out of the race, Intel is the only real participant in the semiconductor race for the US. In recent years Intel has focused on everything and anything other than core semiconductors: software, AI, AR, VR and drones… and of course Mobileye. One could argue that BK took his eye off the ball, distracted by the lure of shiny new toys. We would hope that Intel’s new CEO returns the focus to its core technology heritage and doubles down on it.

Jerry Sanders’ (founder of AMD) quote, “real men have fabs”, may be even more important today, as the real “fab king”, TSMC, owns the foundry industry and is the barbarian at the gates of the CPU industry.

TSMC will make more money from AMD than AMD will

We have pointed out in previous articles that TSMC is the dominant partner in the relationship with AMD. AMD needs TSMC more than TSMC needs AMD. Now that the good ship GloFo has been burned to the ground by its 7NM abandonment, AMD has no escape from TSMC island (Samsung is not a viable rescue). TSMC can control AMD’s profit margins by controlling their chip supply costs. They can also control AMD’s market share by dropping costs to AMD to increase share versus Intel or raising costs to tighten the competition. TSMC is at the controls…

AMD is now largely a captive puppet of TSMC

What is the correct market valuation of a captive puppet? We had suggested in previous notes that AMD was getting overly expensive. The market appears to finally, belatedly, be figuring this out. AMD’s stock seems to have priced in huge share gains and profitability, both of which are still well in the future.

We think analysts would be mistaken to assume that AMD’s supply costs from TSMC will fall sharply or even remain constant as TSMC ramps up supply. AMD’s model is not that of a fab owner with high fixed costs and marginal incremental costs. TSMC controls AMD’s financial model going forward.
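The difference between the two cost models can be sketched with a little arithmetic. The snippet below is only an illustration under stated assumptions: the function names and every number in it are hypothetical, not AMD, Intel or TSMC figures. It assumes a fab owner's unit cost is its fixed costs spread over volume plus a small marginal cost, while a fabless company simply pays whatever per-chip price the foundry sets.

```python
def fab_owner_unit_cost(volume, fixed_costs, marginal_cost):
    """Per-chip cost for a fab owner: fixed costs are spread over volume."""
    return fixed_costs / volume + marginal_cost


def fabless_unit_cost(foundry_price_per_chip):
    """Per-chip cost for a fabless company: the foundry's price, at any volume."""
    return foundry_price_per_chip


# Hypothetical inputs, chosen only to show the shape of each model.
FIXED_COSTS = 100_000_000   # assumed fab depreciation and overhead per period
MARGINAL_COST = 20          # assumed incremental cost per chip
FOUNDRY_PRICE = 80          # assumed per-chip price set by the foundry

for volume in (1_000_000, 2_000_000, 4_000_000):
    owner = fab_owner_unit_cost(volume, FIXED_COSTS, MARGINAL_COST)
    fabless = fabless_unit_cost(FOUNDRY_PRICE)
    print(f"volume={volume:>9,}  fab owner={owner:6.2f}  fabless={fabless:6.2f}")
```

In this toy model the fab owner's unit cost falls as volume grows, while the fabless unit cost moves only when the foundry changes its price, which is the sense in which the supplier sits at the controls.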

The relationship between AMD and GloFo was much more even handed as both sides needed one another more equally than the one sided TSMC/AMD relationship.

Design versus Fabrication

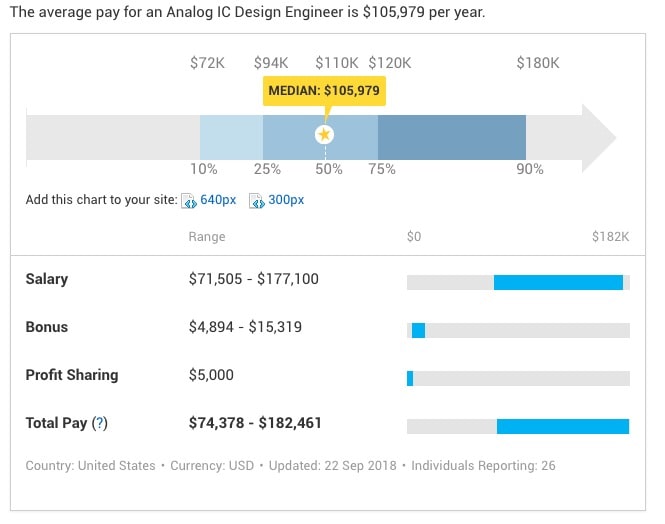

Although modern chip design is more complex and more important than ever before, the fabrication of chips in silicon (Moore’s Law) has become exponentially more complex and has hit many more costly and technically difficult barriers. Just compare the revenues of EDA companies with those of chip tool companies. Look at the cost of building a fab or of mask sets.

While AMD has great CPU designs and great video and AI capabilities, those designs have no value without the ability to fab them. TSMC brings that to the table, and at a level equal to or better than Intel’s.

At the end of the day, it’s the fab’s capability that wins the race… a great design is worthless without a fab that can make it a reality…

10NM finally out of the woods?

We were the first to break the news of Intel’s 10NM delay years ago. At the time we never would have imagined that the delay would be this long. This was far from normal as previously Intel’s “Tick Tock” strategy was as precise as a Swiss watch. Something happened, not clear what, but we think it was beyond just a technical barrier.

High on the rumor mill’s list of causes of the delay is cobalt’s insertion into the manufacturing process. This sounds somewhat similar to the industry’s switch from aluminum to copper, only harder by a few orders of magnitude.

It is interesting to note that TSMC has not done cobalt yet so perhaps it has not suffered the same pain but may in the future. It is also interesting to note that GloFo was trying cobalt as well.

In any event it seems that Intel has “broken the code” and is now yielding better or at least enough to ramp production starting next year.

As most investors know, Intel’s 10NM and TSMC’s 7NM are rough equivalents in geometry and performance. Intel ramping in Q1 2019 would put them about 9 months behind TSMC’s leading edge which has already ramped.

It sounds like TSMC is already pushing hard at 5NM so Intel is going to have to go even harder to make up for lost time. So far TSMC has not gotten tripped up but it has yet to do cobalt and/or EUV. Perhaps if TSMC hits some hurdles Intel may have a chance to catch up but we would not count on it.

The US versus China in the fight for technology dominance

China has already proven to be a formidable competitor in software, Internet, AI, etc. It has a $100B checkbook to advance its semiconductor industry. So far it has made good (perhaps not great) progress. TSMC alone is a big enough prize in the technology race to justify getting even more aggressive on Taiwan. TSMC coupled with some memory fabs on the mainland would be a lethal combination way bigger than Samsung.

Maybe the US government will wake up and support the US semiconductor industry more. Maybe encourage Intel and Micron to merge. Maybe seek a buyer for GloFo Malta that will pick up the baton. Maybe put export restrictions on key US tool technology to delay China’s ascent.

So far, there is no such support from the government for the industry that holds the key to future technology dominance. There have been some very small import tariffs on Chinese goods related to semiconductors but nothing more.

Even though China is obviously no longer a communist country, we think a Lenin quote is appropriate: “The capitalists will sell us the rope with which to hang them.” In other words, we will continue to supply China with the technology that they will eventually dominate us with.

The Stocks

As we have previously suggested, we think AMD is overvalued as investors have stampeded into the stock on a good story without digging much deeper. While we think there is a lot of upside in AMD, we think much of it is already in the stock and there is not a lot of room for any negative news.

As for Intel, ramping 10NM is obviously good news but it won’t really impact things until Q1 2019 at best and, realistically speaking, Q2. In the meantime Intel is still short of 14NM capacity, which has caused it to perform unnatural acts to serve unexpected demand. It has also ticked up capex to try to help out but this also won’t fix the 14NM shortage for at least a couple of quarters as equipment has to roll in. This suggests that AMD will get some opportunistic share gains near term.

Semi Equipment Stocks – News remains negative…RTEC preannounces

As for the equipment companies, the news flow continues to be negative. RTEC pre-announced a roughly 10% shortfall in revenue that will cut EPS by a third or so.
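A revenue miss of about 10% turning into an EPS cut of about a third is what operating leverage looks like. The sketch below uses purely hypothetical numbers (they are not RTEC's actual financials) and assumes a simple split between costs that scale with revenue and costs that stay fixed in the short term. The function names are ours, for illustration only.

```python
def operating_profit(revenue, variable_cost_ratio, fixed_costs):
    """Profit when variable costs scale with revenue and fixed costs do not."""
    return revenue * (1.0 - variable_cost_ratio) - fixed_costs


def profit_change(revenue, shortfall, variable_cost_ratio, fixed_costs):
    """Fractional change in profit caused by a fractional revenue shortfall."""
    before = operating_profit(revenue, variable_cost_ratio, fixed_costs)
    after = operating_profit(revenue * (1.0 - shortfall),
                             variable_cost_ratio, fixed_costs)
    return (after - before) / before


# Hypothetical company: revenue of 100, variable costs at 45% of revenue,
# fixed costs of 38.5, so profit before the shortfall is 16.5.
change = profit_change(revenue=100.0, shortfall=0.10,
                       variable_cost_ratio=0.45, fixed_costs=38.5)
print(f"profit change for a 10% revenue shortfall: {change:.1%}")
```

With these assumed inputs, profit falls from 16.5 to 11, a drop of about a third on a 10% revenue miss. The higher the fixed cost base relative to profit, the larger that multiplier becomes.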

The weakness for Rudolph appears broad based, not just Samsung and not just memory, and sounds like a fair number of tools have either slipped into next year or been canceled.

This also fits with our view of another 10% down leg for the quarter following on KLAC’s reset of Q4 expectations. The chip equipment flu which started with Samsung has spread to Micron, GloFo, TSMC and others. Intel seems to be the only chip company increasing capex near term but obviously not enough to make up for all the other cuts.

It has been a while since the chip equipment industry last had a negative pre-announcement. Things have been that good for too long. RTEC’s pre-announcement underscores that we are in a standard cyclical downturn. At this point 2018 H2 is all downhill and the real question is when will we hit bottom (trough)? Q1 2019, Q2 2019 or further out? Looking at previous down cycles and current demand, it feels a lot like a 3 or 4 quarter downturn.

We would not be surprised to see further negative news either in pre-announcements or quarterly reports as the weakness will show up in revenues and EPS.

We may have another leg down in the stocks. We are also seeing analysts downgrading the stocks, as we did earlier this week, as we get closer to a bottom. This obviously fits the “locking the barn door after the horse has bolted” adage, as the stocks are way down from their peaks, but many bought into the one quarter air pocket fantasy.

Buckle your seat belts for a bumpy earnings season…