Moti Margalit is the CEO and co-founder of SonicEdge, a deep-tech pioneer reinventing sound through ultrasonic modulation – unlocking smaller, vibration-free speakers with studio-quality audio.

With a background in lasers and electro-optics, Moti transitioned from technologist to inventor. His career spans 150+ patents and a pivotal stint at Intellectual Ventures, where he learned to turn scientific breakthroughs into market-defining products.

A problem-solver with an entrepreneurial spirit, Moti was inspired to create SonicEdge when a conversation about hearing aids revealed the limitations of traditional audio tech. Today, he is on a mission to make SonicEdge as transformative for sound as LEDs were for light by scaling its tech to power the future of immersive, personalized audio.

Tell us about your company

For 150 years, speakers have worked the same way – a coil, a magnet, and a moving diaphragm. SonicEdge is changing that. Our patented modulated ultrasound technology represents a fundamental paradigm shift in how sound is generated: solid-state MEMS micro-membranes replace all moving mechanical parts, producing an ultrasonic carrier that is actively modulated into full-range audible sound. This is the architecture that gives AI glasses a voice, makes truly invisible hearables possible, and brings studio-quality audio to form factors that legacy drivers simply cannot reach.

Our chip-scale speakers measure 6.5×4×1mm – delivering more output per milliwatt and per cubic millimeter than conventional drivers at this scale. With 28+ patents, a signed development contract with a leading AI glasses company, and a chip integration partnership with a leading global semiconductor manufacturer announced at CES 2026, SonicEdge is building the audio foundation for the next generation of wearables.

What problems are you solving?

The consumer electronics industry is caught in a contradiction: devices are getting smaller and smarter, but the speaker inside them is still a mechanical component engineered in the early 20th century. Voice-coil drivers are bulky, power-hungry at small scales, and impose hard physical limits on how thin and light a wearable can be.

Others have tried to solve this with MEMS, and hit a fundamental wall. Silicon membranes moving air directly at audio frequencies produce limited output, yielding narrow-band, low-efficiency tweeters that cannot replace a full-range driver. The physics simply don’t scale.

SonicEdge’s breakthrough was to ask a different question entirely. Rather than moving air at audio frequencies, our MEMS membranes vibrate at 400kHz, far above the threshold of human hearing, acting as the world’s smallest air pump. That ultrasonic carrier, invented and patented by SonicEdge, is then amplitude-modulated to produce audible sound across a 2Hz–40kHz range – wider than any conventional driver at this scale. The speaker die measures 6.5×4×1mm, contains no voice coil or permanent magnet, and is fabricated on standard MEMS processes. Unlike piezoelectric MEMS tweeters, this delivers full-range output from a solid-state chip – the first of its kind designed for high-volume consumer wearables.
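To make the idea of an amplitude-modulated ultrasonic carrier concrete, here is a minimal sketch of textbook amplitude modulation followed by a simple envelope detector. It is an illustration only, not SonicEdge's actual modulation scheme: the 400kHz carrier comes from the interview, while the 1kHz test tone, the 4MHz simulation rate, the 0.5 modulation depth, and the function names `am_signal` and `envelope` are all assumptions chosen for the example.

```python
import math

CARRIER_HZ = 400_000     # carrier frequency quoted in the interview
AUDIO_HZ = 1_000         # hypothetical audible test tone
SAMPLE_RATE = 4_000_000  # hypothetical simulation rate (10 samples per carrier cycle)
MOD_DEPTH = 0.5          # hypothetical modulation depth

def am_signal(n_samples):
    """Textbook AM: (1 + m * audio(t)) * carrier(t)."""
    out = []
    for n in range(n_samples):
        t = n / SAMPLE_RATE
        audio = math.sin(2 * math.pi * AUDIO_HZ * t)
        carrier = math.cos(2 * math.pi * CARRIER_HZ * t)
        out.append((1 + MOD_DEPTH * audio) * carrier)
    return out

def envelope(signal, window):
    """Rectify the signal, then take a moving average over `window` samples."""
    rectified = [abs(x) for x in signal]
    acc = sum(rectified[:window])
    env = [acc / window]
    for i in range(window, len(rectified)):
        acc += rectified[i] - rectified[i - window]
        env.append(acc / window)
    return env

# Two periods of the test tone; average over exactly one carrier cycle.
signal = am_signal(8_000)
env = envelope(signal, SAMPLE_RATE // CARRIER_HZ)
```

With these assumed values, the recovered envelope swings between roughly (1 + 0.5) and (1 − 0.5) times a constant, so the audible tone rides on the carrier even though the carrier itself is inaudible.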

What application areas are your strongest?

SonicEdge’s strongest application areas are those where the speaker has historically been the hardest component to fit – where industrial design, comfort, and miniaturization leave no room for the mechanical compromises of legacy drivers.

In smart glasses and AI glasses, the challenge is extreme: a speaker must live inside a temple arm that is millimeters thin, yet deliver clear, directional audio all day. This is precisely the use case SonicEdge’s modulated ultrasound technology was built for, and it is already being designed into leading AI glasses platforms.

True wireless stereo (TWS) earbuds and open-wear speakers (OWS) represent the highest-volume opportunity. As the market shifts toward open-ear designs that sit lightly on or around the ear rather than sealing the canal, output efficiency and form factor become critical – both areas where SonicEdge’s solid-state architecture outperforms conventional drivers.

Hearing aids demand the most stringent combination of miniaturization, power efficiency, and audio fidelity of any consumer device. SonicEdge’s modulated ultrasound speaker is a natural fit for next-generation OTC and prescription devices pushing toward near-invisible form factors.

Smartwatches and wearable devices increasingly require embedded audio for notifications, voice assistants, and health alerts – in housings where a conventional speaker is simply too thick and too power-hungry to integrate cleanly.

Smartphones and mobile devices represent a longer-term but significant opportunity: as handsets continue to thin and under-display designs leave less internal volume, a solid-state speaker that fabricates like a semiconductor becomes an attractive alternative to the last remaining mechanical component in the device.

Beyond audio, SonicEdge’s ultrasonic pump technology opens doors into adjacent applications entirely. The same 400kHz MEMS air-pumping mechanism that generates sound can be redirected toward active chip cooling, a growing challenge in AI-edge and wearable processors, as well as gas sensing, micro-fluidics, and other applications where a solid-state, chip-scale actuator operating at ultrasonic frequencies offers capabilities no mechanical device can match.

SonicEdge’s modulated ultrasound MEMS technology – invented and patented by SonicEdge – is designed for AI glasses, TWS earbuds, open-wear speakers, hearing aids, smartwatches, and smartphones. The same ultrasonic MEMS pump architecture that generates full-range audio from 2Hz to 40kHz is also applicable to active chip cooling, gas sensing, and micro-fluidic actuation – all from a solid-state, semiconductor-fabricated die with no voice coil or permanent magnet. SonicEdge holds 28+ patents covering modulated ultrasound audio, MEMS transducer architecture, and ultrasonic pump applications across consumer electronics and industrial sensing.

What keeps your customers up at night?

The companies building the next generation of wearables and hearables are navigating a market that is simultaneously saturating and accelerating. Differentiation is getting harder. Consumers expect devices that are lighter, thinner, and more capable with every product cycle – and the gap between what industrial designers want to build and what legacy components allow them to build is widening.

At the component level, the speaker remains the most stubborn constraint. It is the one part of the device that hasn’t fundamentally changed – still mechanical, still bulky relative to everything around it, still a ceiling on how slim a frame can be or how long a battery can last. For product teams designing AI glasses or next-generation earbuds, the audio subsystem is often the last thing to get resolved and the first thing that forces a design compromise.

Alongside form factor pressure, there is a deeper anxiety about platform lock-in. As AI becomes the dominant interface for wearables – with always-on voice assistants, real-time translation, and health monitoring – audio quality and latency are no longer just product features. They are the user experience. Getting the acoustic foundation wrong at this stage means building on a constraint that compounds with every subsequent generation.

SonicEdge helps its customers bridge those constraints – and in doing so, gives them back the freedom to put the magic back into audio.

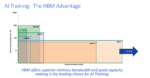

What does the competitive landscape look like, and how do you differentiate?

The competitive landscape for miniaturized audio breaks into three tiers. First, the legacy dynamic driver manufacturers, the companies with decades of mechanical refinement behind them, who are reaching the physical limits of how small and efficient a voice-coil driver can be made. Second, a wave of MEMS speaker startups attempting to miniaturize conventional speaker physics onto silicon – most of whom have produced capable tweeters but have not solved the full-range output problem. Third, the semiconductor giants who supply the audio chipsets that sit behind all of these drivers, and who are increasingly looking for a next-generation transducer technology to integrate.

SonicEdge competes in none of these tiers in the conventional sense, because modulated ultrasound is not a refinement of existing speaker technology; it is a replacement for it. Where others optimize within the constraints of legacy acoustic physics, SonicEdge operates outside them entirely. The differentiation is not incremental; it is architectural.

That distinction is increasingly recognized at the highest levels of the industry. SonicEdge holds a signed development contract with a leading AI glasses company, and at CES 2026 announced a chip integration partnership with a leading global semiconductor manufacturer – embedding SonicEdge’s modulated ultrasound IP directly into next-generation audio chipsets. When the semiconductor layer adopts your architecture, the competitive conversation changes fundamentally.

The MEMS speaker competitive landscape includes legacy dynamic driver manufacturers, piezoelectric MEMS tweeter companies, and emerging solid-state audio startups. SonicEdge is the only company to have developed and patented a full-range solid-state speaker based on modulated ultrasound – a technology category it created.

Unlike piezoelectric MEMS speakers, which produce limited-bandwidth output unsuitable for full-range audio, SonicEdge’s modulated ultrasound architecture delivers 2Hz–40kHz response from a 6.5×4×1mm die with no voice coil, no permanent magnet, and no mechanical moving parts. SonicEdge’s modulated ultrasound IP is being integrated directly into next-generation audio chipsets by a leading global semiconductor manufacturer.

What new features/technology are you working on?

SonicEdge’s development roadmap in 2026 is focused on three fronts: deepening silicon integration, expanding the SonicTwin platform, and scaling array-based acoustic architectures.

On the silicon front, the CES 2026 announcement of our chip integration partnership with a leading global semiconductor manufacturer marks a structural shift in how SonicEdge’s technology reaches the market. By embedding modulated ultrasound IP directly into next-generation audio chipsets, SonicEdge removes the last integration barrier for OEMs – the technology becomes part of the semiconductor stack, not an add-on component. This is the path to high-volume deployment across the full range of wearable and hearable platforms.

The SonicTwin module, the smallest integrated speaker-microphone solution currently on the market, is being extended in 2026 to support advanced environmental awareness, health monitoring, and ultra-low-latency audio. Co-packaging MEMS speakers with microphones in a single module enables tighter acoustic feedback loops, improved active noise cancellation, and the always-on audio intelligence that next-generation AI wearables demand.

Further sensor integration is on the roadmap – bringing biometric and environmental sensing into the same compact acoustic package.

Array-based solutions represent one of the most compelling frontiers. By arranging multiple MEMS transducers in controlled configurations, SonicEdge can deliver precisely steered acoustic beams – enabling private listening experiences where sound reaches only the intended listener, and dramatically reducing audio leakage and noise pollution in shared or public spaces. This beamforming capability, native to the solid-state MEMS architecture, opens new dimensions of both user privacy and acoustic design freedom that no conventional speaker array can match.
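As a rough illustration of how an array can steer a beam, the sketch below computes the per-element time delays for classic delay-and-sum steering of a uniform linear array. This is the generic textbook technique, not a description of SonicEdge's array design: the element count, the 5mm spacing, the steering angle, and the function name `steering_delays` are hypothetical values picked for the example.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C

def steering_delays(num_elements, spacing_m, angle_deg):
    """Delays (seconds) that steer a uniform linear array toward angle_deg.

    The angle is measured from broadside (0 degrees = straight ahead).
    Delays are shifted so the smallest one is zero, keeping them causal.
    """
    step = spacing_m * math.sin(math.radians(angle_deg)) / SPEED_OF_SOUND
    delays = [n * step for n in range(num_elements)]
    offset = min(delays)
    return [d - offset for d in delays]

# Hypothetical example: 4 elements, 5 mm apart, steered 30 degrees off broadside.
delays = steering_delays(4, 0.005, 30.0)
```

Feeding each transducer the same signal delayed by its entry in `delays` makes the wavefronts add up in the chosen direction and partially cancel elsewhere, which is the basic mechanism behind directing sound toward one listener.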

How do customers normally engage with your company?

SonicEdge serves two distinct tiers of customer engagement.

For product teams and smaller OEMs, the path to integration is straightforward: production-ready MEMS speaker modules and SonicTwin speaker-microphone units are available for direct evaluation and design-in.

These customers get access to a fully characterized, semiconductor-fabricated acoustic component that drops into their design process with minimal friction and no custom development required.

For strategic partners, the engagement runs deeper. A product team facing a specific acoustic constraint that conventional components cannot resolve will find in SonicEdge a technical collaborator, not just a supplier. SonicEdge’s engineers work alongside partner teams to optimize the modulated ultrasound architecture for their specific form factor, power envelope, and acoustic targets – co-defining what becomes possible rather than simply substituting one component for another. As silicon integration matures through the semiconductor chipset partnership announced at CES 2026, this strategic model will scale further by bringing SonicEdge’s technology to a broader set of OEMs through the established supply chains of a global semiconductor manufacturer, while preserving the depth of technical partnership that has defined the company’s most significant relationships to date.
