There have always been novel technologies vying to compete with conventional design practices, and their success has been hit or miss. In the ’90s I recall speaking to someone who was convinced that they could effectively build computers based on multilevel logic. This, as we know, did not pan out. But there have been many more ideas that have been partially or fully successful.

Years ago Intrinsity was formed by the former Motorola PPC design team to commercialize their dynamic-logic-based design approach. They could achieve impressive performance/mW numbers, but the approach was only suitable for full custom designs, and working in their design specification language was a challenge. They did some work with Microsoft on one of the early Xbox consoles. They had a tough time selling their solution to a more general market. However, they scored a major win when the design team was acquired by Apple. These are probably the same folks that are building your A9 chips.

One area of consistent effort is clocking schemes. One such effort that seems to have stalled out is resonant clocks. The startup Cyclos produced some chips with AMD, but making the technology commercially viable proved difficult for them. They boasted 4 GHz clock speeds, but this is a clock speed achievable using conventional clock trees, so the benefit may not have justified the added effort.

Azuro is well known for shaking up the CTS market. Before they came along, the big guys probably had one or two part-time developers working on improving CTS. With their dramatic improvements in area and power, they signed some big deals with major chip companies and were eventually acquired by Cadence.

What a lot of people do not know is that their CEO Paul Cunningham originally wanted to develop a commercial solution for clockless design. However, after significant effort they abandoned that and focused on improving the existing CTS methodology. Clockless design still remains the holy grail of power-performance improvement. Of course, it would also be a win across PVT (process, voltage, temperature) in general, as it would handle variation much better.

Wave Semiconductor has developed a clockless approach for implementing digital designs called Wave Threshold Logic (WTL). To make it work they needed to change some fundamental ways circuits are designed, but they claim to be able to deliver equivalent functionality, running much faster, with a minimal area penalty.

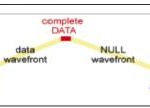

They needed to use 2 wires per signal to convey 0, 1, NOT_DATA, and an unused/illegal state. An increase in signal lines is not an excessive penalty for eliminating an entire clock tree and all its signal wires, buffers, and registers. But they also boast a power consumption win from the elimination of glitching transitions. The only transitions in their logic are true data transitions: when logic changes, it is because of necessary switching. They also had to invent a new type of gate, one that switches based on the number of its inputs that are at logic 1.
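To make the two-wire encoding and the counting gate concrete, here is a minimal Python sketch. It is an illustration only, not Wave's actual circuit: the assignment of wire pairs to states, the names `Signal`, `encode`, and `threshold_gate`, and the purely combinational behavior of the gate are all assumptions made for the example.

```python
from enum import Enum


class Signal(Enum):
    """Two wires per signal, written as (rail_1, rail_0).

    Which wire pattern maps to which state is an assumption for this sketch.
    """
    NOT_DATA = (0, 0)  # no valid data present yet
    ZERO = (0, 1)      # logic 0
    ONE = (1, 0)       # logic 1
    ILLEGAL = (1, 1)   # unused/illegal state


def encode(bit):
    """Map None, 0, or 1 to its two-wire value; None means no data."""
    if bit is None:
        return Signal.NOT_DATA
    return Signal.ONE if bit else Signal.ZERO


def threshold_gate(inputs, threshold):
    """Return 1 when at least `threshold` of the input wires are at logic 1."""
    return 1 if sum(inputs) >= threshold else 0
```

With two wires there are four possible patterns, which is why one of them ends up unused. A 2-of-3 gate, for example, would be `threshold_gate([1, 0, 1], 2)`.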

Using this new gate and backward branching lines to coordinate logic readiness, they can implement the equivalent of any Boolean logic gate and pipelines of arbitrary depth. Arguably this approach still suffers from the problem Intrinsity had, which is the lack of a direct translation from RTL to their gates. However, Wave Semiconductor can easily reach data speeds of 8 to 12 GHz. I am sure the lack of a gate-level compiler has held back their business progression. Now it seems they have found a path that will provide them a bigger TAM.
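As a rough illustration of how a Boolean gate can be assembled from gates that count their high inputs, the sketch below builds a two-wire AND. It is a sketch under stated assumptions, not Wave's implementation: the `(rail_1, rail_0)` wire mapping and the helper names `th` and `dual_rail_and` are hypothetical, and the backward coordination lines and any state-holding behavior are left out.

```python
# Assumed wire mapping (rail_1, rail_0); not taken from Wave's documentation.
NOT_DATA, ZERO, ONE = (0, 0), (0, 1), (1, 0)


def th(threshold, *wires):
    """Counting gate: 1 when at least `threshold` input wires are at logic 1."""
    return int(sum(wires) >= threshold)


def dual_rail_and(a, b):
    """AND of two two-wire signals, built only from counting gates."""
    a1, a0 = a
    b1, b0 = b
    # Result is 1 only when both inputs carry a 1.
    out1 = th(2, a1, b1)
    # Result is 0 when both inputs carry data and at least one is a 0.
    out0 = th(1, th(2, a0, b0), th(2, a0, b1), th(2, a1, b0))
    return (out1, out0)
```

Note that the output stays at NOT_DATA until both inputs hold data, so the gate never emits a provisional value that later changes. That property is what removes glitching transitions in this style of logic.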

Given how much bandwidth they have available, they appear to have decided to move up the abstraction chain and build a fabric of 8-bit processors and a way of converting high-level design specifications into configurations of the fabric. Their website even talks about dynamic reconfiguration during chip operation. While this sounds similar to what an FPGA might offer, FPGAs still have the limitations of a clocked architecture and cannot compete with ASICs and SoCs on performance/area/power.

Wave’s fabric running at ~10 GHz could implement a programmable approach that is fast enough to be the finished chip. In their current offering they have licensed NoC technology from SONICS, and are including a CPU and other IP blocks to round out the functionality. By going with a commercial product that could go to volume, they have solved one of the big problems that other novel clocking schemes have struggled with. If they can make the supporting software and the design specification process straightforward enough, they could be successful in an area where many others have struggled.

I think there might be an interesting back story on how their ‘brilliant’ idea was molded into something that can be marketed effectively to compete with the traditional solutions. After all, there have been a lot of clever ideas that did not quite pan out because there was not a good enough fit with the design needs of the market. One need not look any further than companies like Cyclos, Tabula or Intrinsity for good examples.