Tell us a little bit about yourself and your company.

I founded TekStart Group in Ontario, Canada, in 1998 with a very clear objective: to help innovators turn breakthrough technology concepts into real, market-ready products. Over the past 25-plus years, we have worked across the full lifecycle of technology development, from early concept and architecture through funding, commercialization, and exit.

To date, TekStart has helped develop, fund, and successfully exit more than 120 companies. That experience has given us a deep appreciation for what it really takes to bring complex technologies to market, especially in semiconductors and systems where timelines are long and execution risk is high.

Today, I am most excited about the transformation underway as AI inferencing moves out of centralized data centers and into edge devices. We are seeing a fundamental shift in how intelligence is deployed, and that shift creates both technical and economic opportunities that simply did not exist a few years ago.

What was the most exciting high point of 2025 for your company?

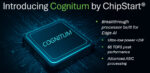

Without question, the most exciting milestone for us in 2025 has been reaching the final stages of tapeout for our latest semiconductor product, Cognitum. This has been a five-year journey, and like most deep-tech efforts, it has involved plenty of unexpected turns along the way.

Bringing a new AI-focused chip to market is never straightforward. There were moments where progress felt incremental and others where challenges stacked up quickly. Still, seeing Cognitum reach this level of maturity has been extremely rewarding. If I ever do write a book, this program would certainly deserve a few chapters.

As we approach launch, the excitement comes not just from completing the silicon but from seeing how customers are already thinking about deploying it to solve real problems at the edge.

What was the biggest challenge your company faced in 2025?

The honest answer is that the biggest challenge in 2025 was everything at once. If there was a category of delay or disruption you could imagine, we likely encountered it.

Market uncertainty created constant pressure on planning and forecasting. We worked closely with customers whose own roadmaps were being affected by factors well beyond their control. On top of that, new tariffs introduced additional complexity around cost structures, supply chains, and contract negotiations.

Each issue on its own would have been manageable. Experiencing them simultaneously required continuous adjustment, clear communication, and a willingness to revisit assumptions more often than usual.

How is your company’s work addressing this challenge?

What I am most proud of is the resilience our team demonstrated throughout the year. There were moments when obstacles felt genuinely insurmountable, yet the team stayed focused on execution and problem-solving rather than getting sidetracked.

We concentrated on what we could control: engineering discipline, customer transparency, and forward momentum. That mindset allowed us to navigate uncertainty while continuing to move Cognitum toward final tapeout.

In semiconductors, persistence matters. Staying aligned as a team and maintaining confidence in the long-term vision is often the difference between programs that stall and programs that succeed.

What do you think the biggest growth area for 2026 will be, and why?

I firmly believe that edge AI will be one of the most important growth areas in 2026. For several years, the industry’s focus has been heavily weighted toward cloud-based large language models and massive data center build-outs. While those investments are necessary, they do not fully address the needs of real-world autonomous systems.

There is a growing gap between what cloud LLMs are optimized for and what edge systems actually require. Latency, bandwidth, cost, power consumption, and data privacy all become critical constraints outside the data center.

In 2026, we will see greater emphasis on pushing intelligence closer to where data is generated, enabling faster, more predictable, and more economical decision-making at the edge.

How is your company’s work addressing this growth?

The demand for edge reasoning is expanding rapidly across robotics, industrial automation, infrastructure, IoT, and enterprise systems, where privacy and determinism are critical. These applications increasingly require local decision-making, yet cannot tolerate the latency, recurring costs, or lack of transparency associated with cloud-based inference.

Cognitum is designed specifically to address this gap. It enables a new class of low-cost devices capable of autonomous reasoning directly on the chip. By moving inference to the edge, customers can significantly reduce or eliminate recurring cloud inference costs.

This changes the economic model from per-token or usage-based billing to a fixed hardware cost, making large-scale deployments practical in scenarios that were previously uneconomical or operationally constrained.
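To make the shift from usage-based billing to a fixed hardware cost concrete, here is a minimal break-even sketch. Every number in it is a hypothetical placeholder chosen for easy arithmetic, not a Cognitum price or a real cloud rate, and the function names are invented for illustration. It also deliberately ignores power, integration, and maintenance costs, which a real comparison would need to include.

```python
def breakeven_tokens(hardware_cost_usd: float,
                     cloud_price_per_million_tokens_usd: float) -> float:
    """Number of tokens at which a one-time hardware cost equals
    the cumulative per-token cloud bill (simplified model)."""
    if cloud_price_per_million_tokens_usd <= 0:
        raise ValueError("cloud price must be positive")
    return hardware_cost_usd / cloud_price_per_million_tokens_usd * 1_000_000


def breakeven_days(hardware_cost_usd: float,
                   cloud_price_per_million_tokens_usd: float,
                   tokens_per_day: float) -> float:
    """Days of operation until the fixed hardware cost is recovered."""
    if tokens_per_day <= 0:
        raise ValueError("tokens_per_day must be positive")
    tokens = breakeven_tokens(hardware_cost_usd,
                              cloud_price_per_million_tokens_usd)
    return tokens / tokens_per_day


if __name__ == "__main__":
    # Hypothetical inputs: a 10 USD device, 0.50 USD per million cloud
    # tokens, and 200,000 tokens of inference per device per day.
    print(breakeven_tokens(10.0, 0.5))         # 20000000.0
    print(breakeven_days(10.0, 0.5, 200_000))  # 100.0
```

Under these assumed inputs, the device pays for itself after 20 million tokens, or about 100 days of operation. After that point each additional inference adds no further cloud charge, which is why a fixed-cost model can favor large fleets of always-on devices.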

What conferences did you attend in 2025, and how was the traffic?

We sponsored and attended the AI Infra Summit in September 2025, and the difference compared to the prior year was striking. Attendance was significantly higher, and the level of engagement was much stronger.

We saw more informed conversations, more qualified prospects, and a clearer understanding of why edge AI infrastructure matters. Being featured in a success story at the event was also valuable, as it allowed us to share our journey and lessons learned with a broader audience.

Will you participate in conferences in 2026? The same number as in 2025, or more?

Conferences will continue to play an important role in our marketing and business development strategy. While digital engagement is effective, there is still no substitute for in-person conversations when discussing complex technologies.

We will kick off 2026 at CES and expect to maintain, if not increase, our level of conference participation throughout the year. The quality of interaction we see at these events continues to justify the investment.

How do customers normally engage with your company?

Our business is highly specialized, and we intentionally focus on a narrow, well-defined customer segment. We have served this market for many years and have built a reputation as a trusted partner rather than a transactional vendor.

That trust works to our advantage as we introduce new products. Being a known entity means customers are already familiar with our approach and capabilities. As a result, maintaining awareness, sharing meaningful updates, and staying engaged with key stakeholders remain among the most effective ways we work with our customers.

Additional comments?

The world has become a more complex and challenging place, both personally and professionally. Technology continues to advance at an accelerating pace, and it can be difficult to keep up with everything that is changing.

That makes it even more important for technology providers to be thoughtful and deliberate about what they build and how those technologies are brought to market. The decisions made over the next few years are likely to have an impact that extends far beyond the near term.

If we approach this moment with care and responsibility, the opportunities ahead are significant. How we move forward matters.
