You might be right: Thanks for the articles -- the first link was really interesting, especially how training is sorta not parallel but you still get the benefits of multiple GPUs. The extra bandwidth of NVLink makes a lot of sense for this.

I think the only PCIe 5.0 GPUs available today are the new Blackwell professional GPUs, with the 50 series to follow?

What GPU has PCIe 5.0? Do any graphics cards support PCIe 5 in 2024?

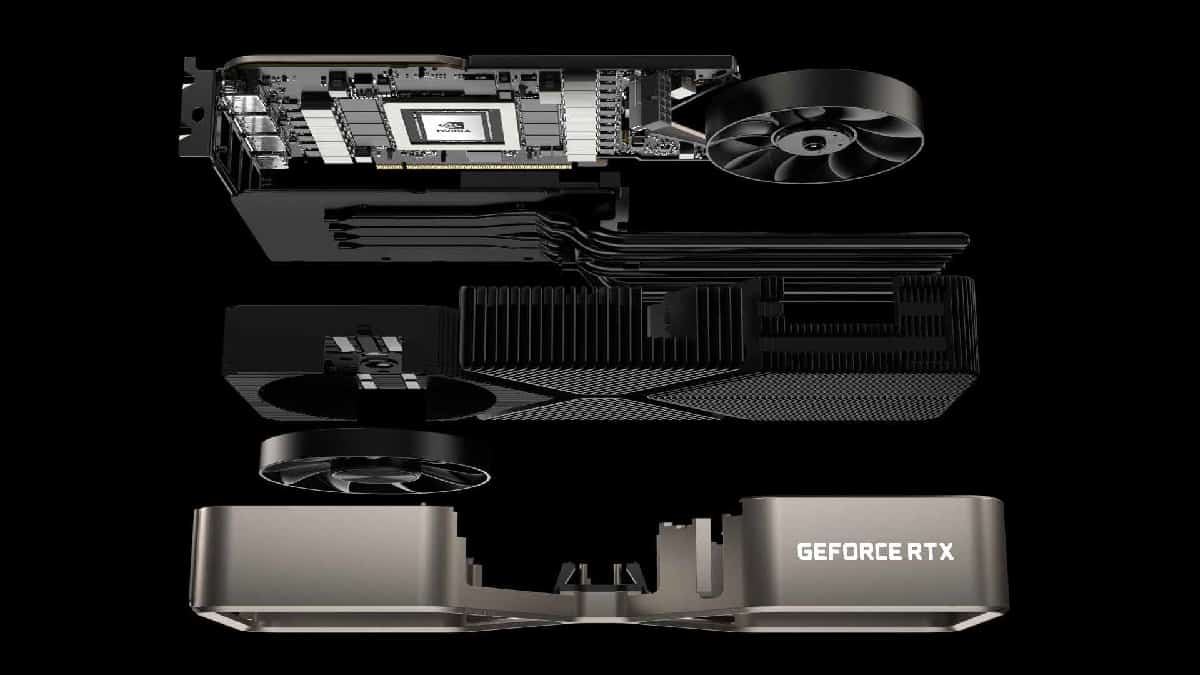

There are no mainstream GPUs that support PCIe 5.0. However, we think they could be just on the horizon. Here's what you need to know.