Though terminology sometimes gets fuzzy, consensus holds that an agent manages a bounded task, controlled through a natural language interface. An agentic orchestrator, itself an agent, manages a more complex objective requiring reasoning through multi-step actions and is responsible for orchestrating those actions. By way of example, setting up an individual simulation might be handled by an agent. An orchestrator could manage setting up multiple scenarios for running simulations, launching those runs, debugging detected failures and summarizing results. The result is more hands-free automation, at least in principle, freeing engineers to explore a wider range of optimizations and analyses.

A concrete example

These days I’m very much into specific instances of AI benefits rather than abstract potential benefits. Amit Gupta (chief AI strategy officer, senior vice president and general manager, Siemens EDA) shared a nice example, close to his prior roots in founding and growing Solido.

A designer wants to fully characterize a logic function across all relevant process corners. They have Liberty files for some voltage and temperature corners, encoded in the file names, but not all. The orchestrator figures out what is missing and sets up tasks for a generator agent to build the missing files, here leveraging Solido ML-based technology.
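To make the "figures out what is missing" step concrete, here is a minimal sketch of how missing corners might be inferred from file names. Everything specific is an assumption for illustration: the naming convention (`<name>_<process>_<voltage>v_<temp>c.lib`), the corner values, and the function names are hypothetical and do not come from Solido or Fuse.

```python
import itertools
import re

# Hypothetical naming convention: <name>_<process>_<voltage>v_<temp>c.lib
# e.g. cells_tt_0p80v_25c.lib, with "m40" standing for -40 degrees C.
CORNER_RE = re.compile(
    r"_(?P<process>[a-z]+)_(?P<volt>\d+p\d+)v_(?P<temp>m?\d+)c\.lib$"
)

def parse_corner(filename):
    """Return (process, voltage, temperature) parsed from a file name, or None."""
    m = CORNER_RE.search(filename)
    if m is None:
        return None
    return (m.group("process"), m.group("volt"), m.group("temp"))

def missing_corners(filenames, processes, voltages, temps):
    """List every wanted corner that has no matching Liberty file."""
    have = {c for c in map(parse_corner, filenames) if c is not None}
    wanted = itertools.product(processes, voltages, temps)
    return [c for c in wanted if c not in have]

# Illustrative data only.
existing = [
    "cells_tt_0p80v_25c.lib",
    "cells_ss_0p72v_125c.lib",
    "cells_ff_0p88v_m40c.lib",
]
todo = missing_corners(
    existing,
    processes=["ss", "tt", "ff"],
    voltages=["0p72", "0p80", "0p88"],
    temps=["m40", "25", "125"],
)
print(f"{len(todo)} corners still need Liberty files")
```

Each entry in `todo` would then become a task handed to the generator agent.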

Next the orchestrator triggers validation agents to check the generated files, looking for potential inconsistencies or unphysical behavior. Where problems are found the orchestrator can trigger repair agents, then re-run validation. This may resolve most errors, perhaps leaving only one or two files that must be corrected manually.
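The validate, repair, re-validate cycle can be sketched as a bounded loop. This is only an illustration under stated assumptions: `validate` and `repair` are hypothetical callables standing in for the validation and repair agents, and the round limit is an invented parameter, not something described for Fuse.

```python
def validate_and_repair(files, validate, repair, max_rounds=3):
    """Return the files that still fail validation after up to max_rounds repairs.

    validate(f) returns a list of issues (empty means clean);
    repair(f) attempts a fix. Both are hypothetical stand-ins for agents.
    """
    failing = [f for f in files if validate(f)]
    for _ in range(max_rounds):
        if not failing:
            break
        for f in failing:
            repair(f)
        # Re-run validation only on the files that were just repaired.
        failing = [f for f in failing if validate(f)]
    return failing

# Toy stand-ins: each file has a count of outstanding issues,
# and each repair attempt removes one of them.
issues = {"a.lib": 0, "b.lib": 1, "c.lib": 2, "d.lib": 5}

def toy_validate(f):
    return ["issue"] * issues[f]

def toy_repair(f):
    issues[f] = max(0, issues[f] - 1)

leftover = validate_and_repair(list(issues), toy_validate, toy_repair)
print("needs manual correction:", leftover)
```

Bounding the number of rounds is what leaves the "one or two files" for a human, rather than letting the loop retry indefinitely.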

Contrast this with the effort a designer must invest today: figuring out which files are missing, setting up Solido runs to build those files, checking each and manually repairing errors, then re-running validation. Each step is well within the capabilities of the designer, but together they add up to a lot of administrative overhead, burning expert designer cycles that could be better invested elsewhere. Agentic systems automate that overhead, directed by natural language setup. They don’t replace the designer; they make the designer more productive.

A challenge

In the example above, all the EDA technologies managed by agents are from Siemens EDA. How does an agentic flow work when tools you might want to include in an agentic task are provided by competing EDA vendors? In my earlier example of setting up multiple simulation scenarios, running, then debugging, maybe your approved simulator is from one vendor whereas your preferred debugger is from another. How well can an orchestrator work in a mixed-vendor flow?

This isn’t a question of data compatibility. Most EDA tools support industry-approved or de facto standards. The issue is that effective agents need deep insight into how to control tools, for which there are no standards (in fact standards here would stifle innovation). An agent must understand not only internal controls within a tool but also the intent behind those controls. In the Fuse Agentic flow this insight is enabled by a RAG pipeline.

I have written before about RAG (retrieval-augmented generation) and its evolution to higher accuracy. First-generation RAG, as in early chatbots, digested natural language text from any source and could then answer question prompts on any topic covered in that text. Pretty slick, except that while often impressive, answers were sometimes spectacularly wrong. When you (a human) read the retrieved text, this isn’t too damaging, since you quickly spot the mistake. But it is a big problem when an agent is relying on the accuracy of retrieval.

Now there is a concept of advanced RAG, which itself depends on agentic methods to improve accuracy in retrieval through reasoning, self-reflection and verification. Accuracy goes up significantly, as you have probably noticed in recent AI responses accompanying Google searches. Newer methods continue to advance, but the important point here is that we can have more confidence in document scraping, which in turn can drive more accurate agentic behavior around a tool (though still not perfect, still requiring that we trust but verify, as in all things AI).

Which leads back to how to integrate a “foreign” tool into an agentic flow. A design team or the tool providers can build a model by scraping tool documentation, and that model can then be integrated under the Fuse EDA AI agent.
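For readers who want a feel for the retrieval step underneath this, here is a deliberately simple sketch: bag-of-words cosine similarity over documentation snippets. It is not the Fuse pipeline and has none of the reasoning or verification stages of advanced RAG; the tool commands in the snippets (`set_corner`, `dump_waves`, `report_coverage`) are invented for illustration.

```python
import math
import re
from collections import Counter

def tokens(text):
    """Lowercase a string and split it into word tokens."""
    return re.findall(r"[a-z0-9_]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, chunks, k=1):
    """Return the k documentation chunks most similar to the query."""
    q = Counter(tokens(query))
    ranked = sorted(
        chunks, key=lambda c: cosine(q, Counter(tokens(c))), reverse=True
    )
    return ranked[:k]

# Hypothetical documentation snippets for a "foreign" tool.
docs = [
    "set_corner selects the process voltage and temperature corner for a run",
    "dump_waves writes signal waveforms to a file for later debug",
    "report_coverage prints functional coverage results",
]
top = retrieve("how do I write waveforms for debug", docs, k=1)
print(top[0])
```

An agent would pass the retrieved snippet to a language model as context before deciding which tool command to issue, which is why retrieval accuracy matters so much here.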

More on the Fuse EDA AI Agent

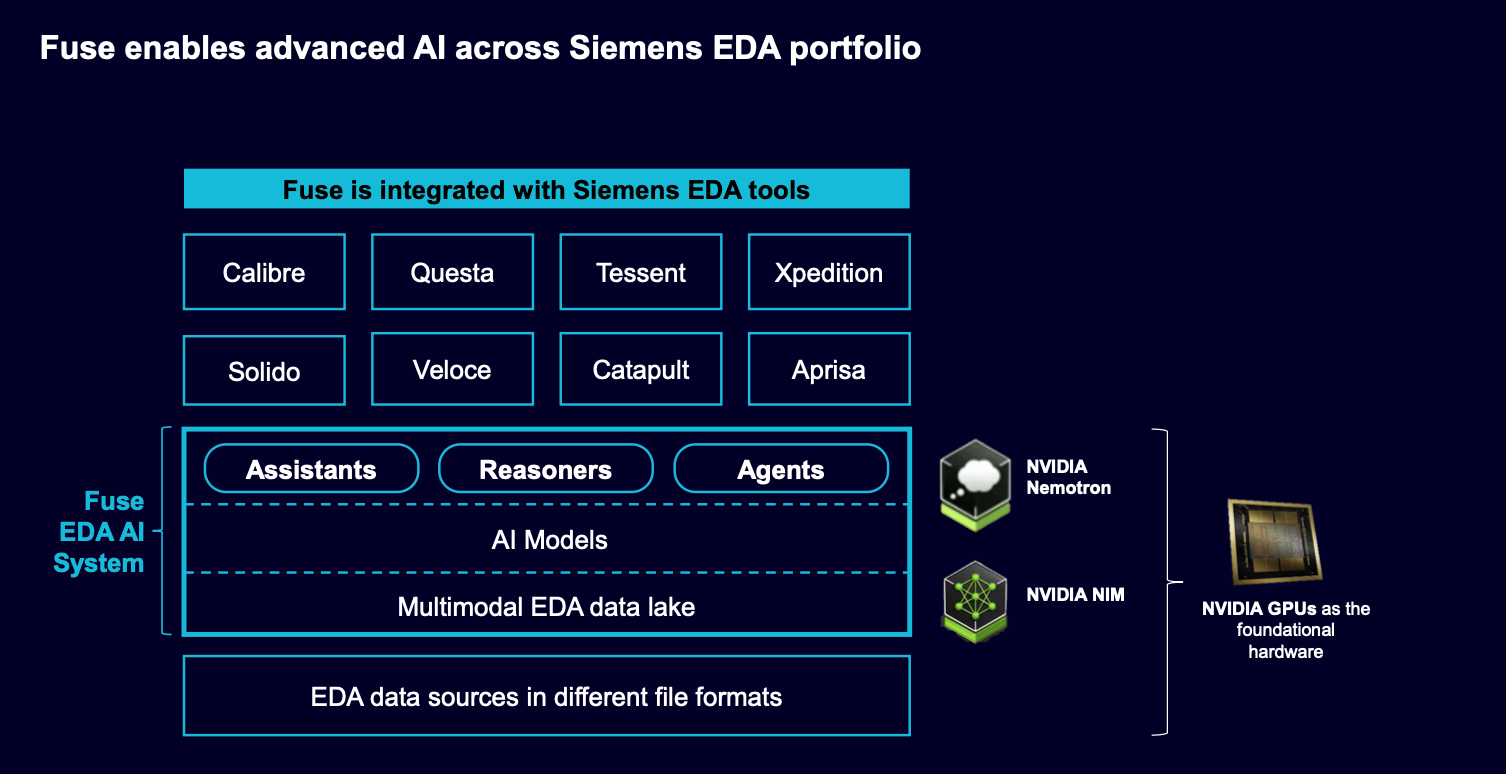

Fuse can plan and orchestrate across the full range of Siemens EDA tools, from front to back in IC and PCB circuit design, implementation, verification and manufacturing sign-off. For industry-standard agentic compatibility, Fuse supports MCP interfaces, the NVIDIA Agent Toolkit, Nemotron models and the NVIDIA AI infrastructure for enhanced tool calling and reasoning capabilities.

Fuse builds on an advanced RAG pipeline and a multimodal EDA-specific data lake spanning RTL, simulation, implementation, test and more.

Siemens already boasts endorsements from Samsung (Jung Yun Choi, executive vice president of Memory Design Technology, Samsung Electronics) and NVIDIA (Kari Briski, vice president of generative AI, NVIDIA).

The product debuted at NVIDIA GTC 2026 and is available today.
