You may think that quantum theory is fully understood but that view is not quite right. There remain open questions around the uncertainty principle, wave-particle duality, measurement collapse, and harmonizing quantum mechanics and gravitation. These concerns may seem very abstract and irrelevant to everyday applications but together promote a lingering sense that we still don’t fully understand quantum mechanics. Efforts to correct our understanding have been with us for over 100 years, starting with Einstein, Born and many others.

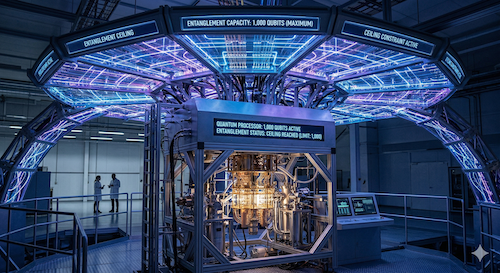

One such proposal in a recent paper could have rather dramatic consequences for quantum computing. The method used to address gaps in our understanding suggests that there might be a theoretical upper bound to the number of qubits that can be usefully superposed and/or entangled at any one time. If true, industrial cryptography may never be cracked by a quantum computer. The paper is available from the Proceedings of the National Academy of Sciences, though this is an easier read. Below is my attempt to abstract the key ideas. Apologies up-front – this is a geeky blog.

Clarification

The paper doesn’t suggest a limit on the number of qubits we can stuff into a quantum computer. That number might be practically bounded but so far is not theoretically bounded. The limit proposed in this paper is on how large a set of interdependent qubits is possible in a quantum algorithm.

Superposition/entanglement is table stakes for any useful quantum algorithm. You may be able to compute with a larger number of qubits, but not any faster than a classical computer if you don’t use superposition or entanglement. Interdependence between qubits is fundamental to quantum advantage.

Rethinking space

There is a widely held (not universal) view in physics that to unify quantum theory and general relativity we must switch from continuous space representations to a view that space is not arbitrarily divisible. There are physical limits (Planck length) on continuity, also uncertainty and wave-particle duality start to look more reasonable in discrete space. Further, in quantum computing an information-theoretic view of qubit states is appealing following Shannon, and this too works most comfortably in discrete space.

An N-qubit vector should in principle be able to address any arbitrary state in the full possible state space of that vector. Remember qubit states are slightly more involved than regular bit states. A bit can be 0 or 1. A qubit can be ⍺|0> + β|1> where |0> and |1> are “pure” quantum states like spin-up and spin-down and ⍺, β are complex phases such that |⍺|2 + |β|2 = 1. In continuous space quantum theory this formulation can represent any possible state in the full state space of a qubit, similarly an N-qubit vector could represent any state in that N-qubit space. However, if space is discretized in some manner, this guarantee can no longer be provided according to the paper, and there is an upper limit to how many states can be addressed by an N-qubit vector.

Since discretized, each qubit ⍺ and β will have a finite range of possible values. Extending to an N-qubit vector there will be similarly be a finite bound on how many states can be encoded within the state space. Less obviously, there will be in-principle “reachable” states in the state space which fail to meet discretization rules, and therefore are undefined/unreachable. As you add qubits, state space expands exponentially as do unreachable states, and quantum advantage begins to tail off. The author suggests that the absolute upper limit N for which a qubit vector could effectively address only legal states is 1000 qubits and that practical limits could be even lower.

This 1,000-qubit limit is well below any qubit count I have seen suggested (>104) for Shor or beyond-Shor algorithms applied to RSA 2048. If the theory is correct, this is a significant limitation. Many useful applications may still be possible, especially in quantum chemistry and materials science, but problem sizes will be much more constrained than we have been led to believe.

It’s just a theory

True, although the theory proposes intriguing resolutions to several of the open quantum questions I mentioned earlier. The paper suggests a practical test for a limit, which should be possible within the next 5-10 years. Simply run Shor’s algorithm attempting to factor a large integer on an N-qubit machine (logical qubits). If the concept behind the algorithm holds, performance should saturate to classical performance beyond some threshold for N. The paper suggests saturation may start as low as 500 qubits. If quantum advantage disappears or starts to disappear around this point, we will have hit a fundamental barrier in quantum computing. If not, then the theorists must go back to the drawing board.

Incidentally, the author illustrates his reasoning using rational number discretization, though stresses that his primary conclusion should hold (if correct) independent of that choice.

I will be interested to hear what QM builders and theorists have to say about this.

Also Read:

Another Quantum Topic: Quantum Communication

PQShield on Preparing for Q-Day

Where is Quantum Error Correction Headed Next?

Share this post via:

Musk’s Orbital Compute Vision: TERAFAB and the End of the Terrestrial Data Center