RISC-V has momentum. The industry knows it. The harder question is: who can actually deliver when and where it matters?

A Shift That Changes the Stakes

On March 24, 2026, Arm made something explicit: it is now a silicon company. After decades as a neutral IP provider, Arm is moving up the stack. It’s building chips and complete solutions, not just licensing architectures.

This is a fundamental shift. Arm’s value was rooted in being a trusted, independent provider of processor and system IP, enabling an ecosystem in which its customers could build differentiated SoCs. That dynamic is changing. Even if the change is gradual, the implication is clear: Arm is moving closer to its customers’ end markets and, in some cases, competing with them. Moments like this trigger strategic reassessment.

RISC-V Moves to Center Stage

In that context, RISC-V is no longer peripheral to the conversation.

What was once seen as promising but fragmented has matured. With RVA23, the ecosystem now has a defined baseline for high-performance, general-purpose compute. Operating systems such as Linux can target a consistent profile, removing a major barrier to adoption.

RISC-V is no longer just an ISA. It is becoming a platform.

An Active but Uneven Market

Progress is real. Silicon exists. Teams are building. Some deployments are already in production.

But the landscape remains unsettled. Companies have exited, been acquired, or shifted to internal use. Many others remain focused on embedded or domain-specific markets.

The result is a fragmented picture: high activity, uneven maturity, and limited clarity on who is building for high-performance, general-purpose compute. The open question is who is actually building deployable platforms for the open market.

The Gap Between Talking and Shipping

The constraint is no longer architecture. It is execution.

High-performance compute requires more than a core. It requires a complete system: out-of-order CPUs, coherent memory, system IP, high-speed IO, and a usable software stack. And most importantly, it requires real silicon on which real workloads can be run, measured and benchmarked.

This is where ambition meets reality.

From IP to Systems: Akeana’s Approach

A small number of companies are addressing this gap directly. Akeana is one of them.

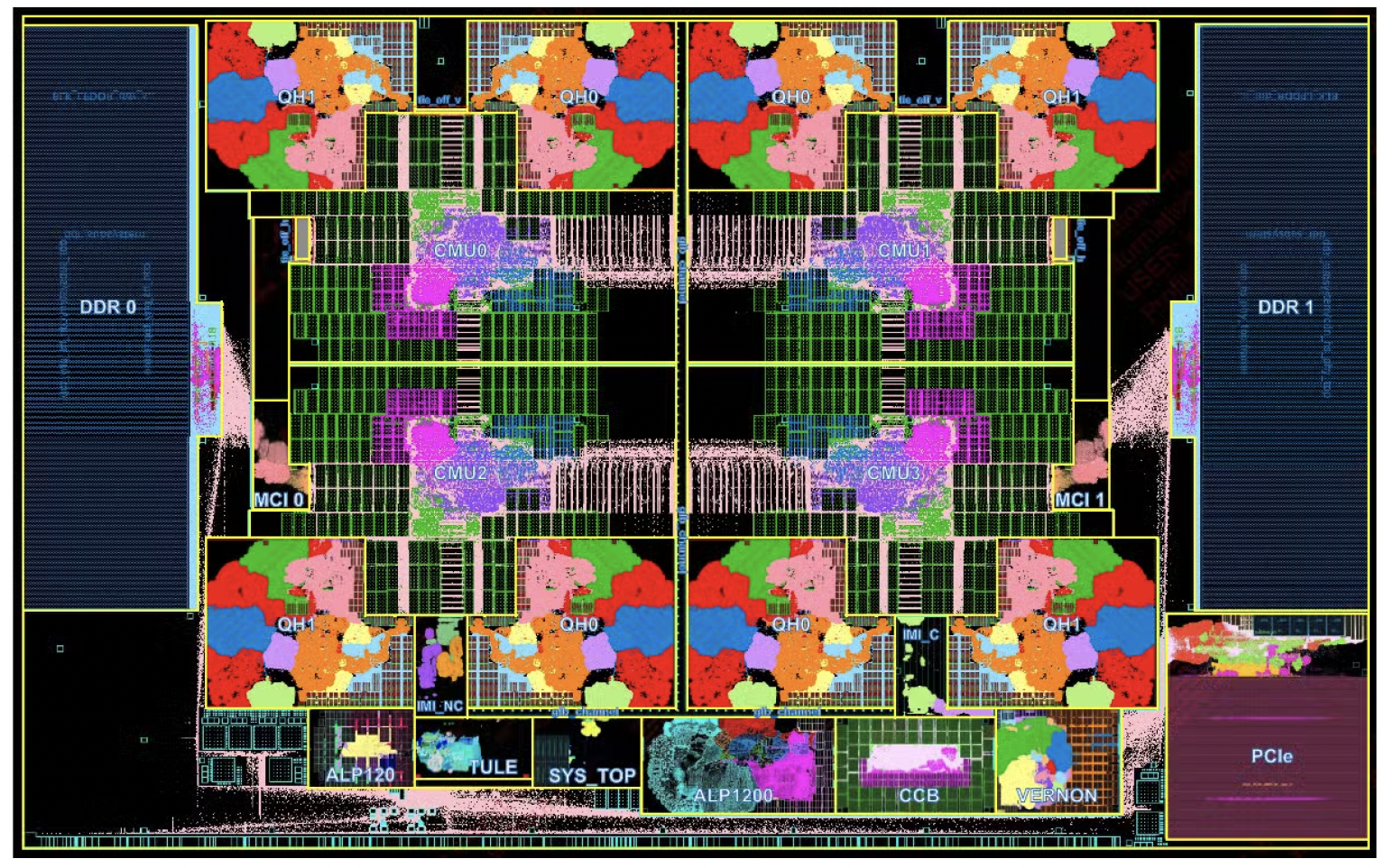

Rather than focusing solely on CPU IP, Akeana is building a system-level platform. Its Alpine test chip, taped out in December 2025, is a 4nm RVA23-compatible SoC designed for software development and validation.

Akeana was able to lean on its strong server class SoC pedigree to showcase the maturity and quality of its processor plus system IP portfolio by pulling together an SoC design and taping it out in a short amount of time.

Alpine integrates an eight-core cluster of 64-bit out-of-order (application) processors, additional control cores, a coherent mesh, full system IP, and high-performance IO including LPDDR5 and PCIe Gen5. Of note, the test chip also showcases a 4-way simultaneous multi-threaded and wide vector (512b) in-order core that is very commonly used in AI (xPU) chips.

Alpine Test Chip

The system has also been validated with a full software stack, including Linux; running prior to tape-out via emulation.

A defining feature is configurability: pipelines, vector units, and other parameters can be tuned for specific workloads, particularly in cloud and AI environments. This shifts hardware closer to a programmable solution.

The focus is clear: the ability to deploy, not just the ability to design.

Performance Is Now Part of the Equation

Akeana’s 5100, 5200, and 5300 series target performance tiers aligned with modern high-performance CPUs, with benchmarking against publicly available silicon providing early validation.

Industry signals point in the same direction. Recent RISC-V summit keynotes highlight increasingly ambitious designs, including many-core systems targeting server-class workloads.

The intent is clear. Execution is the differentiator.

Why This Remains Difficult

High-performance silicon demands deep expertise, large-scale verification, advanced process nodes, tight hardware-software integration, and significant upfront investment. Even experienced teams take years to deliver production-ready systems.

The RISC-V ecosystem of silicon, systems, and software appears large, but at the high-performance level it is much smaller.

As a result, the number of credible players narrows quickly at the high end. This is not a limitation of RISC-V. It reflects the level of execution required.

Why Timing Matters

The urgency is increasing. Agentic AI workloads are elevating the role of CPUs in orchestration and data movement. At the same time, companies are reassessing architectural dependencies, especially in light of shifts like Arm’s move into silicon.

With RVA23 in place, expectations for RISC-V have risen. The question is no longer whether it can work but rather whether it can deliver now.

Furthermore, the software lift often cited as a barrier to entry for high-performance RISC-V is smaller in this setting. An orchestration CPU for agentic workloads does not carry the same burden of middleware and application porting and support as a general-purpose cloud or enterprise CPU.

From Momentum to Delivery

RISC-V has crossed an important threshold: momentum, relevance, and demand are all in place.

What matters now is delivery. Who can move from roadmap to silicon, from silicon to systems, and from systems to deployment?

Summary

RISC-V’s moment has arrived. The next phase will be defined not by participation but by execution, by those who can translate momentum into real, high-performance platforms. Because in the end, adoption comes down to one thing: what can be built, shipped, and trusted.
