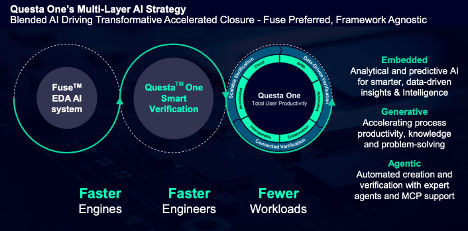

There’s no denying that verification now leads the field in agentic AI announcements for design automation, and the trend is accelerating. Siemens have just announced their Questa One Agentic Toolkit, their response to this trend, building on the core Questa One platform. Questa One provides integrated simulation and static verification, embedded AI driving common management across those tools, and VIP supporting these functions. The Agentic Toolkit adds automated creation and orchestration of verification tasks to provide end-to-end solutions. With endorsements from NVIDIA and MediaTek, among others, this update is worth a look.

Strategy and foundation

Announcements in this space are inevitably similar, so what makes the Siemens approach different? They are leading with a foundation of openness and organic development. Start with openness. The Agentic Toolkit provides MCP (Model Context Protocol) interfaces to underlying Questa One functions, so any agentic framework can connect to them. Their own agentic app, Fuse, naturally makes full use of these interfaces and is the “preferred” option, but it does not prevent other frameworks from connecting.

Sidebar on this point: tool providers have a natural advantage in knowing how best to use their own tools. That expertise can be captured as vectorized knowledge embedded in agentic apps. But how well does this work in a mix-and-match flow? This question deserves more debate between design teams and verification technology providers.

The organic differentiation is also interesting. Siemens have built their Fuse EDA AI system in-house. Supported workflows leverage the NVIDIA Llama-Nemotron reasoning framework and NVIDIA NIM inference microservices, enabling the platform to understand verification state in real time and to maintain contextual awareness of the relationships between designs, testbenches, test plans and specifications. Apparently thanks to these foundations, the system also works with mainstream AI coding applications, including GitHub Copilot, Claude Code, Cursor and Cline, and can be used in command-line mode (for scripting) or through IDEs such as VS Code.

All this works with a multimodal EDA data lake, which captures baseline manuals and user documentation. LLM-based assistants, reasoners and similar components draw on this information to direct run objectives and orchestration.

Siemens also notes that, by building on the existing connected ecosystem linking Questa One, Tessent™ software for DFT and the Veloce™ CS hardware-assisted verification and validation system, the Agentic Toolkit supports a broad range of design and verification objectives.

This system ships today with several pre-built and tested agents for customers who want a quick start in pilot trials: an RTL code agent, a Lint agent, a CDC agent, a verification planning agent and a debug agent. To expand a little on the benefits Siemens describes, the Verification Planning Agent organizes tasks, coverage goals and requirements, and offers AI-driven suggestions for efficient resource allocation. Engineers gain clarity, adaptability and accelerated closure, supporting comprehensive verification and faster project completion.

The Debug Agent accelerates root-cause analysis by pinpointing issues in RTL and testbenches with AI-driven insights. It offers targeted suggestions, automates error tracing, and guides engineers to efficient resolution. With smart diagnostics, it reduces debug cycles and boosts productivity, helping teams deliver robust designs faster.

What about guardrails and trust?

This is a question I am now asking all agentic verification solution providers. The upside of hands-free automation is huge; the downside of unsupervised AI could be even more dramatic. I asked Abhi Kolpekwar (Sr. VP and GM at Siemens Digital Industries) for his views on this challenging balance.

Abhi agreed that while there is buzz across all industries about the potential of AI and agentic methods, there is equal buzz about hype outrunning reality and about most pilot programs failing to translate into production. How do successful deployments navigate this challenge? Abhi had a two-part answer. First, while surveys reporting those failures certainly highlight a problem, we shouldn’t underestimate the success AI and agentic methods already enjoy in quietly successful embedded use cases. Examples include cars (I have written about this recently), our phones, and factory automation. Another interesting example is a method that detects what you are saying by watching your facial muscles, even without clear audible speech. It is not available yet but is presumably coming to a phone, car or other device near you in the not-too-distant future.

Intriguing, but in SoC and system design we are very sensitive to reliability and pinpoint accuracy. How can AI and agentic methods align with these needs? In Abhi’s view, one way to secure that level of quality is through guardrails implemented using proven, non-AI core EDA technologies: formal methods, simulation, and so on. The second way is to implement processes that require human-in-the-loop judgement at checkpoints. There can still be a big win, even though the whole process isn’t pushbutton. With agentic support, DV engineers graduate from being tool operators, who must know all the minutiae of running (and debugging) scripts and tools, to becoming verification scientists, who know how to judge outcomes at intermediate steps and which high-level corrections to try when an outcome is off.
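To make the two-layer idea concrete, here is a minimal sketch, assuming a generic agent that emits candidate changes. It is not Siemens’ implementation and does not use any Questa One or Fuse API; `Proposal`, `run_with_guardrails`, `not_empty` and `no_unknown_values` are hypothetical names, and the checks are toy stand-ins for real lint, formal or simulation runs. Each proposal must first pass every deterministic, non-AI check, and only then reaches a human checkpoint.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Proposal:
    """A change suggested by an AI agent (hypothetical structure)."""
    name: str
    patch: str


# A guardrail check is a deterministic, non-AI function: it returns None on
# pass, or a short reason string on failure. In a real flow this would wrap
# lint, formal or simulation runs; here it is only a toy stand-in.
Check = Callable[[Proposal], Optional[str]]


def run_with_guardrails(
    proposals: List[Proposal],
    checks: List[Check],
    human_approves: Callable[[Proposal], bool],
) -> Tuple[List[str], Dict[str, str]]:
    """Apply two gates: non-AI checks first, then a human checkpoint."""
    accepted: List[str] = []
    rejected: Dict[str, str] = {}
    for p in proposals:
        # Gate 1: any failing deterministic check vetoes the proposal.
        reasons = [r for r in (check(p) for check in checks) if r is not None]
        if reasons:
            rejected[p.name] = "; ".join(reasons)
            continue
        # Gate 2: a human judges the result before it is accepted.
        if human_approves(p):
            accepted.append(p.name)
        else:
            rejected[p.name] = "declined at human checkpoint"
    return accepted, rejected


# Hypothetical toy checks standing in for real verification tools.
def not_empty(p: Proposal) -> Optional[str]:
    return None if p.patch.strip() else "empty patch"


def no_unknown_values(p: Proposal) -> Optional[str]:
    return "introduces 'x' assignment" if "'bx" in p.patch else None


if __name__ == "__main__":
    proposals = [
        Proposal("fix_reset", "assign rst_n = por_n & sw_rst_n;"),
        Proposal("bad_default", "assign data = 8'bx;"),
        Proposal("noop", "   "),
        Proposal("refactor_fsm", "// re-encode FSM states as one-hot"),
    ]
    # Stand-in for an engineer's judgement: decline the FSM refactor.
    approve = lambda p: p.name != "refactor_fsm"
    accepted, rejected = run_with_guardrails(
        proposals, [not_empty, no_unknown_values], approve
    )
    print(accepted)  # ['fix_reset']
    print(rejected)
```

The ordering is the design choice worth noting: cheap, trusted, deterministic checks filter out clearly bad proposals, so the human checkpoint is spent only on changes that already pass the non-AI guardrails.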

I like it – what DV engineer wouldn’t want to upgrade their day-to-day workload to become a verification scientist?

Nice positioning. Still, the proof will be in how DV engineers and product managers react in practice. You can read more about the release HERE and get more insight into product details HERE.
