Samsung shares rise after Nvidia's Huang flags tie-up on new AI chips

By Hyunjoo Jin

March 16, 2026, 5:20 PM CDT

A Samsung Electronics logo and a computer motherboard appear in this illustration taken August 25, 2025. REUTERS/Dado Ruvic/Illustration

March 17 (Reuters) - Shares of Samsung Electronics (005930.KS) rose as much as 5% on Tuesday after Nvidia (NVDA.O) CEO Jensen Huang said the South Korean company was producing Nvidia's new artificial intelligence chips.

The news fuelled expectations that Samsung's foundry division, which makes logic chips for customers including Tesla (TSLA.O), Apple (AAPL.O) and Samsung's phone division, may be able to turn around as early as next year after posting billions of dollars in annual losses in recent years, analysts said.

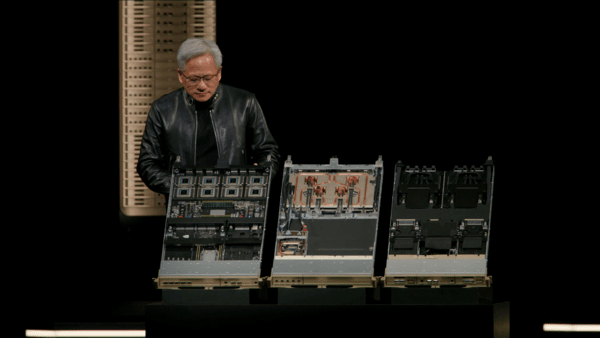

At Nvidia's GTC developer conference in California on Monday, Huang unveiled Nvidia's new AI inference processor based on technology from chip startup Groq.

"I want to thank Samsung who manufactures the Groq LP30 chip for us and they're cranking as hard as they can," he said, adding the chips were in production, and would be shipped in the second half of this year.

Samsung also showcased the Nvidia chips made using its 4-nanometer manufacturing process at the GTC.

Samsung shares were up 4.3% at 196,800 won as of 0252 GMT, after earlier reaching 198,000 won. The broader market (.KS11) was up 2.7%.

Sohn In-joon, an analyst at Heungkuk Securities, said he expected Samsung's foundry business to reach breakeven later next year. But he said weak mobile phone demand stemming from surging memory chip prices could weigh on foundry earnings.

Advanced Micro Devices' (AMD.O) CEO Lisa Su will meet Samsung Electronics Chairman Jay Y. Lee in South Korea on Wednesday, media reports said, with eyes on whether the two would discuss cooperation in memory chips and logic semiconductors.
