user nl

712,805 views Apr 15, 2026 Dwarkesh Podcast

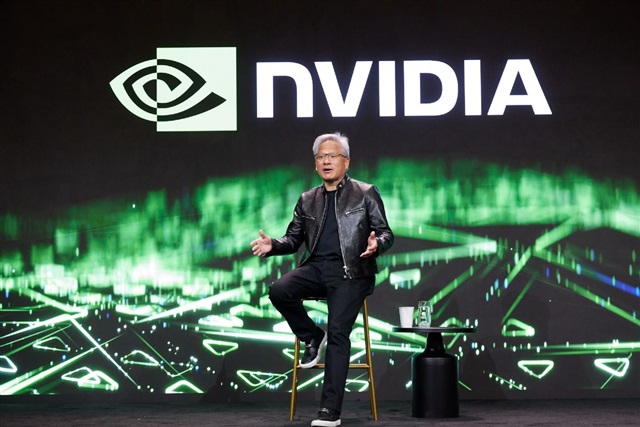

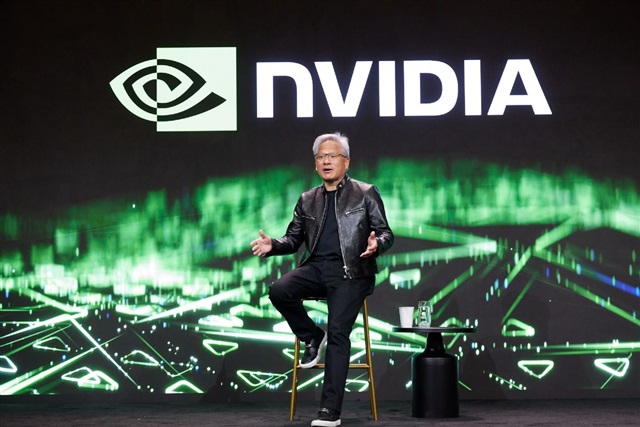

I asked Jensen about TPU competition, Nvidia’s lock on the ever more bottlenecked supply chain needed to make advanced chips, whether we should be selling AI chips to China, why Nvidia doesn’t just become a hyperscaler, how it makes its investments, and much more. Enjoy!

𝐓𝐈𝐌𝐄𝐒𝐓𝐀𝐌𝐏𝐒

00:00:00 – Is Nvidia’s biggest moat its grip on scarce supply chains?

00:16:25 – Will TPUs break Nvidia’s hold on AI compute?

00:41:06 – Why doesn’t Nvidia become a hyperscaler?

00:57:36 – Should we be selling AI chips to China?

01:35:06 – Why doesn’t Nvidia make multiple different chip architectures?

----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Nvidia CEO Jensen Huang clarified in an April 2026 interview with Silicon Valley podcast host Dwarkesh Patel that the company allocates GPUs on a first-come, first-served basis rather than a highest-bidder-wins basis.

Huang explained that Nvidia evaluates customers' demand forecasts and purchase orders (POs), checks whether each customer's data center infrastructure is ready, and then allocates units according to order timing. Customers whose infrastructure is not yet complete may receive lower priority in order to maximize overall production efficiency.
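For anyone who wants to reason about what that policy implies, here is a toy sketch in Python. It is not Nvidia's actual process: `Order`, `allocate`, and `site_ready` are hypothetical names, the customers and numbers are made up, and treating readiness as a strict "ready sites first" rule is an assumption, since the article only says unready customers "may receive lower priority". Note that price appears nowhere in the data model.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Order:
    customer: str      # hypothetical customer label
    po_date: date      # date the purchase order was placed
    units: int         # number of GPUs requested
    site_ready: bool   # assumption: data center can receive hardware now


def allocate(orders: list[Order], supply: int) -> dict[str, int]:
    """Fill orders in PO-date order, serving ready sites before unready ones."""
    # Sort key: ready sites first (False sorts before True), then earliest PO.
    queue = sorted(orders, key=lambda o: (not o.site_ready, o.po_date))
    allocation: dict[str, int] = {}
    for order in queue:
        if supply == 0:
            break
        granted = min(order.units, supply)
        allocation[order.customer] = allocation.get(order.customer, 0) + granted
        supply -= granted
    return allocation


# Made-up example: A ordered first but its site is not ready yet.
orders = [
    Order("A", date(2026, 1, 5), 400, site_ready=False),
    Order("B", date(2026, 2, 1), 300, site_ready=True),
    Order("C", date(2026, 3, 1), 500, site_ready=True),
]
result = allocate(orders, supply=700)
print(result)
```

With 700 units available, B and C are served in order of their PO dates and A waits until its site is ready, even though A ordered earliest. A bidding model would need a price field and would sort on it, which is exactly what Huang says does not happen.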

Addressing rumors of price-based allocation, Huang firmly denied any such practice, stating that Nvidia's quoted prices are final and do not rise with demand.

He stated that the company aims to be a reliable foundational supplier for the industry, capable of fulfilling massive AI infrastructure orders with stable commitments.

𝐄𝐏𝐈𝐒𝐎𝐃𝐄 𝐋𝐈𝐍𝐊𝐒

- Transcript: https://www.dwarkesh.com/p/jensen-huang

- Apple Podcasts: https://podcasts.apple.com/us/podcast...

- Spotify: https://open.spotify.com/episode/1viB...

- Crusoe's cloud runs on state-of-the-art Blackwell GPUs, with Vera Rubin deployment scheduled for later this year. But hardware is only part of the story—for inference, Crusoe's MemoryAlloy tech implements a cluster-wide KV cache, delivering up to 10x faster TTFT and 5x better throughput than vLLM. Learn more at https://crusoe.ai/dwarkesh

- Cursor helped me build an AI co-researcher over the course of a weekend. Now I have an AI agent that I can collaborate with in Google Docs via inline comment threads! And while other agentic coding tools feel like a total black box, Cursor let me stay on top of the full implementation. You can try my co-researcher out at https://github.com/dwarkeshsp/ai_cowo..., or get started on your own Cursor project today at https://cursor.com/dwarkesh

- Jane Street spent ~20,000 GPU hours training backdoors into 3 different language models, then challenged my audience to find the triggers. They received some clever solutions—like comparing the base and fine-tuned versions and extrapolating any differences to reveal the hidden backdoor—but no one was able to solve all 3. So if open problems like this excite you, Jane Street is hiring. Learn more at https://janestreet.com/dwarkesh

Nvidia says GPU allocation follows first-come, first-served principle, not highest bidder

www.digitimes.com