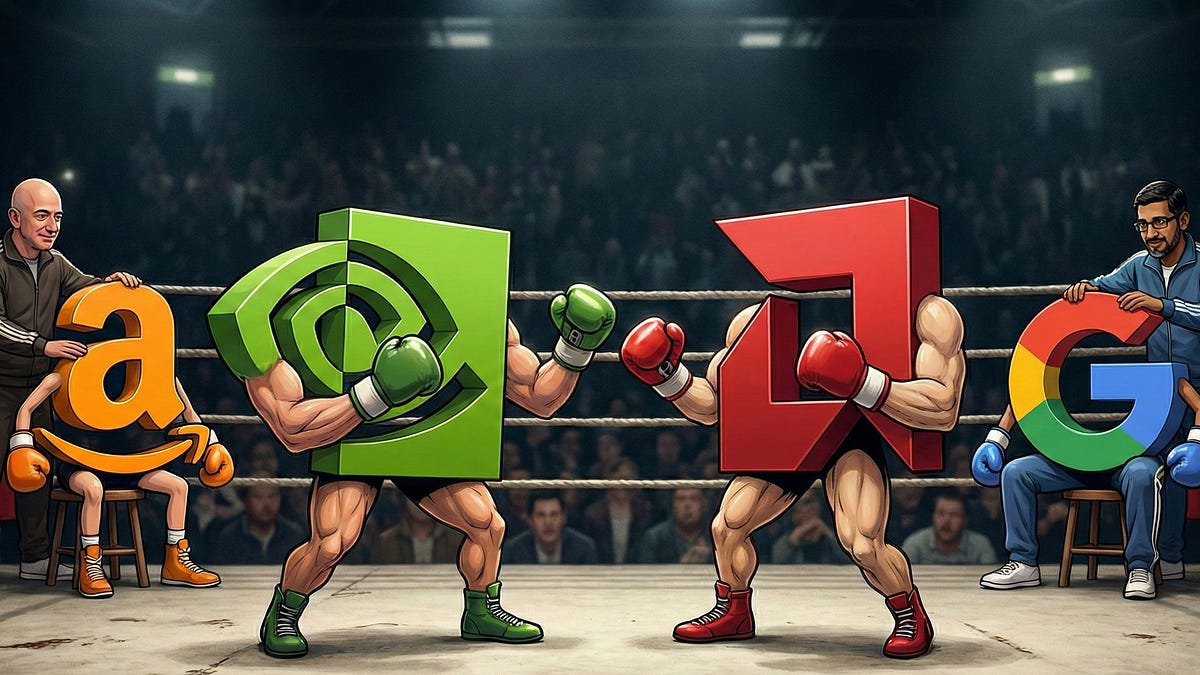

Do Amazon's chips have any fundamental improvements that AMD, Nvidia, Intel, or Qualcomm don't already offer, or is this more of "we have chips available to buy"?

OK - claimed benefits via Perplexity, with associated caveats (below):

Amazon’s in-house chips are mainly claimed to excel on price-performance, throughput, latency, memory bandwidth, and energy efficiency versus general-purpose GPUs. The strongest claims are for AWS Inferentia and Trainium: lower inference cost, higher throughput, and better performance per watt for AI workloads.[247wallst +1]

Claimed strengths (mostly relative to earlier Amazon chips; no direct comparisons with merchant-market competitors)

• Lower cost for AI inference and training. AWS says Inferentia2 delivers up to 4x higher throughput and up to 10x lower latency than Inferentia, while Inf1 instances can provide up to 70% lower cost per inference than comparable EC2 instances.[aws.amazon]

• Better price-performance than GPUs. Amazon has said its Trainium-based systems can cut AI training and inference costs by up to half versus comparable GPU setups, framing custom silicon as a cost-leadership play.[247wallst]

• Higher throughput and memory capacity. AWS says Inferentia2 has 32 GB of HBM per chip, 4x the memory of Inferentia, and 10x the memory bandwidth, which helps with larger and more complex models.[aws.amazon]

• Scale-out inference support. Inf2 instances are described as the first inference-optimized EC2 instances with ultra-high-speed chip-to-chip connectivity for distributed inference.[aws.amazon]

• Framework compatibility. AWS says Neuron integrates natively with PyTorch and TensorFlow, so customers can use existing workflows with fewer code changes.[aws.amazon]

• Energy efficiency. AWS claims Inf2 instances offer up to 50% better performance per watt than comparable EC2 instances.[aws.amazon]
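To make the "up to X% lower cost per inference" claims above easier to reason about, here is a minimal sketch of how such a figure is typically derived from an instance's hourly price and its sustained throughput. All numbers and function names are hypothetical placeholders, not AWS pricing or measured results; the point is only that the headline saving depends on both inputs and on which baseline is called "comparable".

```python
def cost_per_million_inferences(hourly_price_usd: float, throughput_per_sec: float) -> float:
    """Dollars needed to serve one million inferences at steady-state throughput."""
    inferences_per_hour = throughput_per_sec * 3600
    return hourly_price_usd / inferences_per_hour * 1_000_000


def relative_saving(baseline_cost: float, candidate_cost: float) -> float:
    """Fractional saving of the candidate versus the baseline (0.7 means 70% lower)."""
    return 1 - candidate_cost / baseline_cost


# Hypothetical inputs for illustration only (NOT real prices or benchmarks).
gpu = cost_per_million_inferences(hourly_price_usd=4.00, throughput_per_sec=1000)
custom = cost_per_million_inferences(hourly_price_usd=2.00, throughput_per_sec=1500)
saving = relative_saving(gpu, custom)

print(f"GPU: ${gpu:.2f}/M, custom: ${custom:.2f}/M, saving: {saving:.0%}")
```

With these made-up inputs the saving works out to roughly two thirds, but a cheaper or faster baseline shrinks it quickly, which is why a third-party benchmark with a fixed baseline matters more than a vendor's "up to" figure.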

Important caveat

These are Amazon’s own claims, and some outside reporting says the chips still trail Nvidia in certain areas, especially latency and software maturity. So the core technical story is not “best overall chip,” but rather “optimized for AWS workloads at lower cost, with performance improving generation by generation.”

Real Benchmark Results

I’m personally waiting to see how Amazon Trainium does on the InferenceX data-center-scale inference benchmarks, to find out whether any of these claims hold up in a third-party assessment. Per a couple of sources, Amazon has signed up to do the InferenceX work with SemiAnalysis but hasn’t yet produced any real results that can be compared against the existing NVIDIA and AMD numbers. The longer it takes, the less likely it is that they have a competitive product at the rack level.

The Artist Known as InferenceMAX. GB300 NVL72, MI355X, B200, H100, Disaggregated Serving, Wide Expert Parallelism, Large Mixture of Experts, SGLang, vLLM, TRTLLM

newsletter.semianalysis.com

The fact that Amazon has paired with Cerebras on disaggregated inference also suggests to me that their chips aren't particularly good at decode compared with other new solutions.