user nl

Well-known member

Very informative paper with many details on Groq 3 LPX and how it relates to (agentic) AI. It includes many nice graphs, some of which Jensen Huang also showed in the keynote:

https://developer.nvidia.com/blog/i...celerator-for-the-nvidia-vera-rubin-platform/

The system is built around 256 interconnected NVIDIA Groq 3 LPU accelerators. Its architecture emphasizes deterministic execution, high on-chip SRAM bandwidth, and tightly coordinated scale-up communication so interactive inference can stay responsive even as concurrency rises and request shapes vary.

Deployed alongside Vera Rubin NVL72, LPX accelerates the latency-sensitive portions of the decode loop, including FFN and MoE expert execution, while Rubin GPUs continue to handle prefill and decode attention. Together, they deliver a heterogeneous serving path that improves interactive responsiveness without sacrificing AI factory throughput.
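To make that split concrete, here is a toy sketch of how I read it: prefill and decode attention stay on the Rubin GPUs, while the FFN / MoE expert part of each decode step goes to LPX. Everything in it (the `Stage` names, the `PLACEMENT` table, the `decode_step_plan` helper, the device labels) is made up for illustration and is not an NVIDIA API. The blog also does not describe the actual scheduling at this level of detail, so treat the per-layer hand-off as my assumption.

```python
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"
    DECODE_ATTENTION = "decode_attention"
    DECODE_FFN = "decode_ffn"
    DECODE_MOE_EXPERTS = "decode_moe_experts"


# Hypothetical placement table based on the quoted description:
# GPUs keep prefill and decode attention, LPX takes FFN and MoE experts.
PLACEMENT = {
    Stage.PREFILL: "rubin_gpu",
    Stage.DECODE_ATTENTION: "rubin_gpu",
    Stage.DECODE_FFN: "lpx",
    Stage.DECODE_MOE_EXPERTS: "lpx",
}


def decode_step_plan(num_layers, moe=True):
    """List the (layer, stage, device) hops for generating one token.

    Assumes each layer runs attention first and then either MoE experts
    or a dense FFN, which is the usual transformer layout.
    """
    ffn_stage = Stage.DECODE_MOE_EXPERTS if moe else Stage.DECODE_FFN
    plan = []
    for layer in range(num_layers):
        plan.append((layer, Stage.DECODE_ATTENTION, PLACEMENT[Stage.DECODE_ATTENTION]))
        plan.append((layer, ffn_stage, PLACEMENT[ffn_stage]))
    return plan


if __name__ == "__main__":
    for layer, stage, device in decode_step_plan(num_layers=2):
        print(f"layer {layer}: {stage.value} -> {device}")
```

In this reading every decode token bounces between the two device pools once per layer, which would explain why the article stresses deterministic execution and tightly coordinated scale-up communication.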

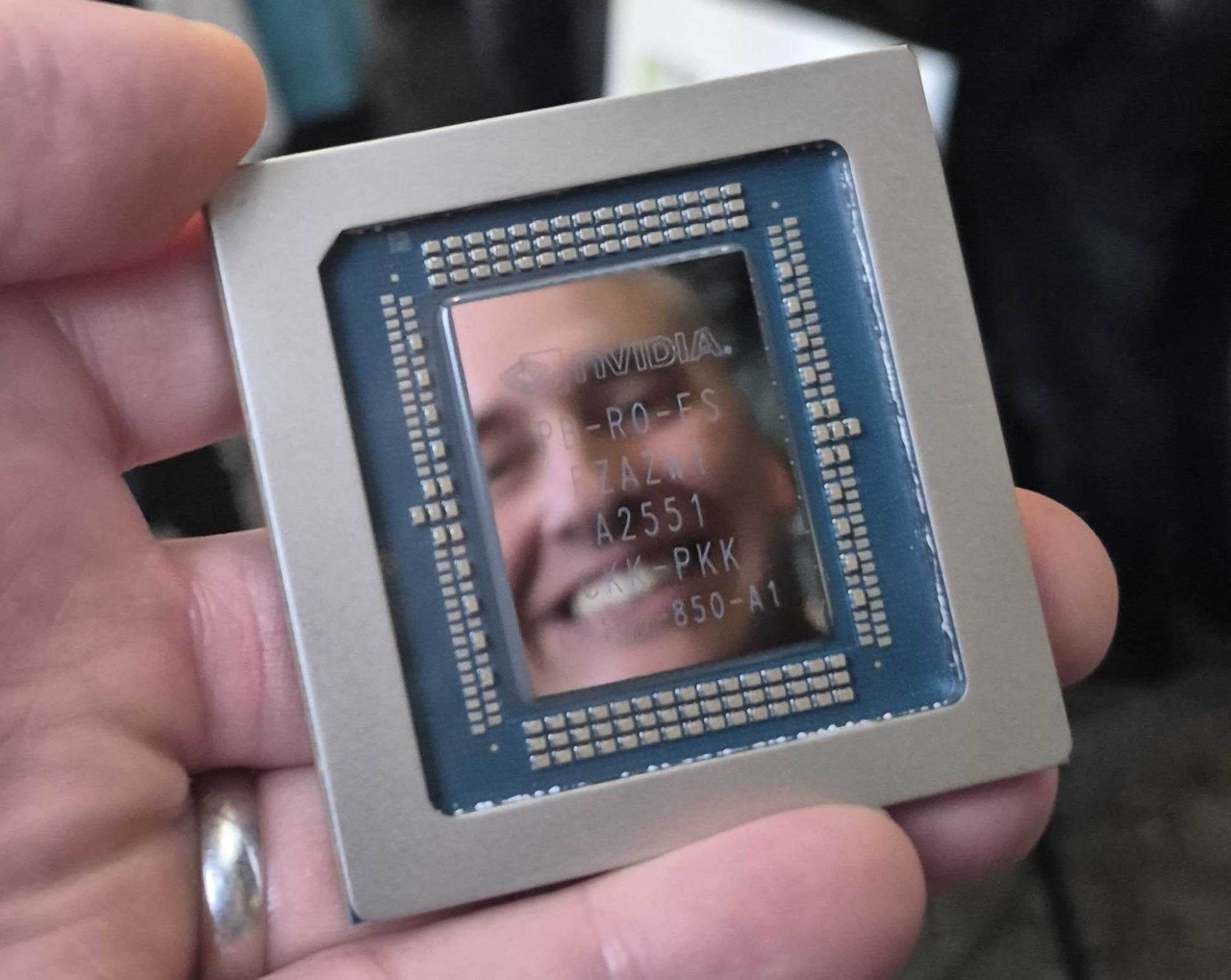

Introducing NVIDIA Groq 3 LPX

Vera Rubin and LPX unite the extreme performance of Rubin GPUs and LPUs to deliver up to 35x higher inference throughput per megawatt and up to 10x more revenue opportunity for trillion-parameter models. Integrated with the NVIDIA MGX ETL rack architecture and aligned with the broader Vera Rubin platform, LPX gives data centers a way to deploy a dedicated low-latency inference path alongside Vera Rubin NVL72 within a common infrastructure design.
