Recently we have been swamped by news of Artificial Intelligence applications in hardware and software, driven by the increased adoption of Machine Learning (ML) and the shift of the electronics industry towards IoT and automotive. While plenty of discussions have covered the progress of embedded intelligence in product roll-outs, applying more intelligence within the EDA world itself deserves greater focus.

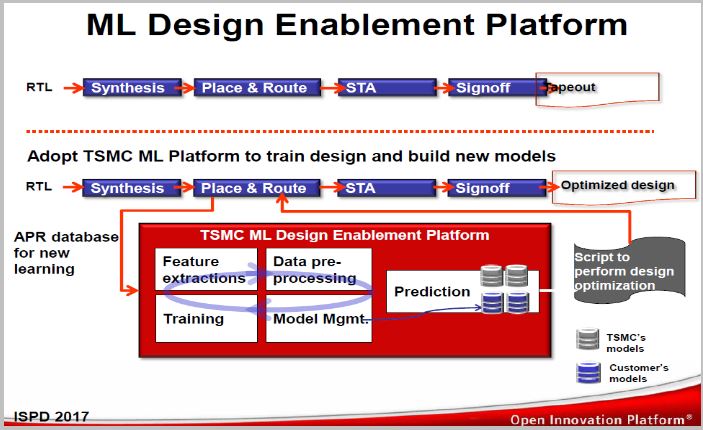

Earlier this year TSMC reported a successful initial deployment of machine learning on ARM A72/73 cores, where it helps predict optimal cell clock-gating to achieve overall chip speed gains of 50 to 150 MHz. The technique involves training models using open source algorithms maintained by TSMC.

At ISPD 2017, TSMC referred to this platform as the ML Design Enablement Platform. It was anticipated to allow designers to create custom scripts to cover other designs.

During the 2017 CASPA Annual Conference, Cadence Distinguished Engineer David White shared his thoughts on the current challenges faced by the EDA world, which fall into three areas:

- Scale – with increasing design sizes come more rules and restrictions and massive amounts of data, such as simulation, extraction, polygon, and technology-file data.

- Complexity – more complex FinFET process technologies result in complicated DRC/ERC, while pervasive interactions between chip and package/board are becoming the norm. In addition, thermal physical effects between devices and wires need attention.

- Productivity – scale and complexity introduce uncertainty and more iterations, while the pool of trained design and physical engineers remains limited.

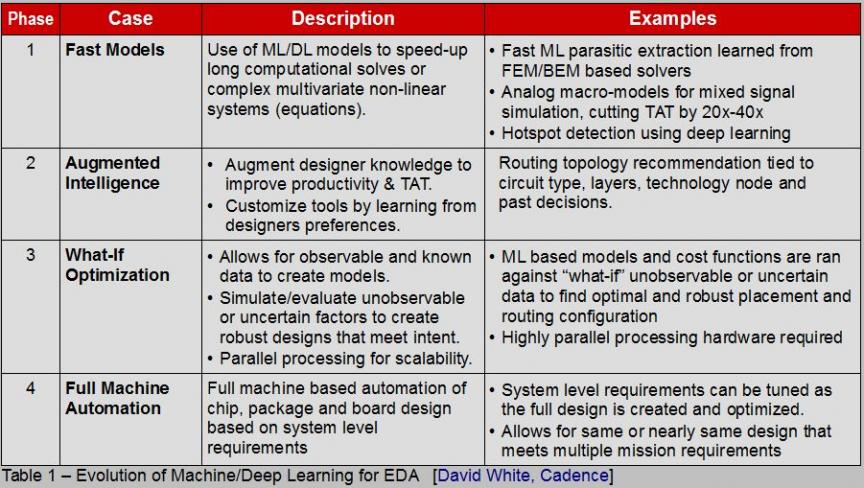

Furthermore, David categorized the pace of ML (or Deep Learning) adoption into 4 phases:

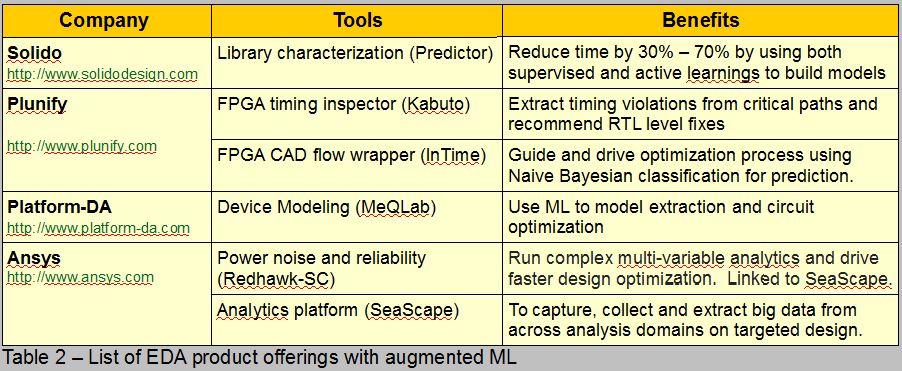

Although the EDA industry has started embracing ML this year as a new avenue to enhance its solutions, the question is: how far have we gone? At DAC 2017 in Austin, several companies announced that they were augmenting their product offerings with ML, as shown in table 2.

You might have heard the famous quote, “War is 90% information”. ML adoption may require good data analytics, as one is faced with massive amounts of data to handle. For most hardware products, augmenting with ML can be done either at the edge (gateway) or in the cloud. With respect to EDA tools, it also becomes a question of how large and accurate the trained models need to be and whether they require many iterations.

For example, predicting the inclusion of via pillars in a FinFET process node could be done at different stages of design implementation, while the model accuracy should be validated post-route. Injecting them during placement would differ from doing so in physical synthesis, where there is still no concept of a legalized design or projected track usage.

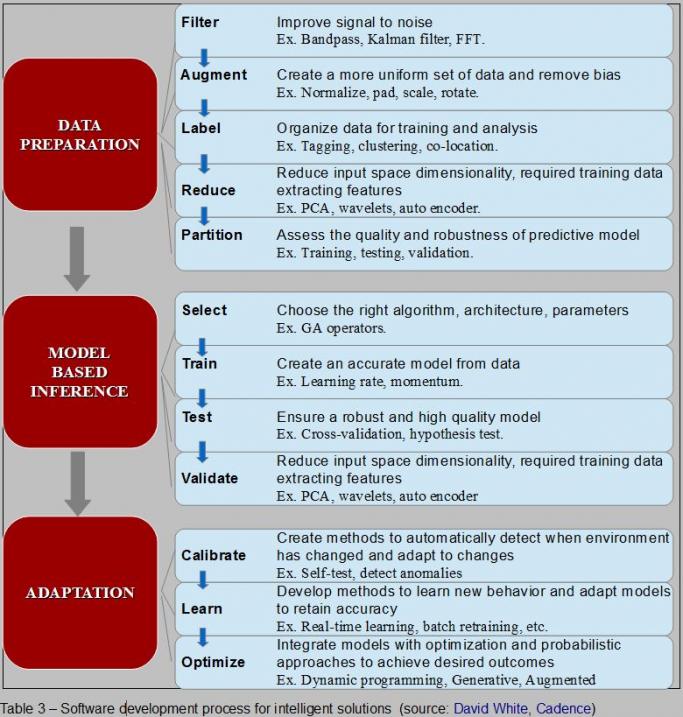

Let’s revisit David’s presentation and find out what steps are required to design and develop intelligent solutions that harness ML, analytics and the cloud, coupled with prevailing optimizations. He believes the work comprises two phases: a training development phase and an operational phase. Each implies a certain context, as shown in the following snapshot (training = data preparation + model based inference; operational = adaptation).

The takeaways from David’s formulation involve properly managing data preparation to reduce the data size before generating, training, and validating the model. Once that is complete, calibration and integration into the underlying optimization or process can take place. He believes that we are just starting phase 2 in augmenting ML into EDA (refer to table 1).
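To make the two phases more concrete, here is a minimal Python sketch of the flow described above: reduce the data, train a model, validate it on held-out samples, then use it in an operational step. Everything in it is an assumption for illustration and does not come from David’s presentation: the data is synthetic, the two features (`cell_density`, `pin_density`) and the hotspot label are hypothetical, and a nearest-centroid classifier stands in for whatever model a real tool would use.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is repeatable


def make_samples(n):
    """Synthetic stand-in for tool data (hypothetical features).

    Each sample is ((cell_density, pin_density), is_hotspot).
    """
    samples = []
    for _ in range(n):
        label = rng.randint(0, 1)
        centre = 0.8 if label else 0.3
        features = (round(rng.gauss(centre, 0.05), 3),
                    round(rng.gauss(centre, 0.05), 3))
        samples.append((features, label))
    return samples


def prepare(samples, keep_every=4):
    """Data preparation: drop exact duplicates, then downsample."""
    unique = sorted(set(samples))
    return unique[::keep_every]


def train(samples):
    """Training: compute one mean feature vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in acc)
            for label, acc in sums.items()}


def predict(model, features):
    """Return the label whose centroid is closest to the features."""
    def dist2(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: dist2(model[label]))


def validate(model, samples):
    """Validation: fraction of held-out samples predicted correctly."""
    hits = sum(predict(model, f) == y for f, y in samples)
    return hits / len(samples)


def flag_hotspots(model, tiles):
    """Operational phase: the surrounding flow asks the model which tiles to fix."""
    return [tile for tile in tiles if predict(model, tile) == 1]


# Training development phase
raw = make_samples(400)
reduced = prepare(raw)
train_set, holdout = reduced[::2], reduced[1::2]
model = train(train_set)
accuracy = validate(model, holdout)

# Operational phase
flagged = flag_hotspots(model, [(0.3, 0.3), (0.8, 0.8)])

print(f"kept {len(reduced)} of {len(raw)} samples, holdout accuracy {accuracy:.2f}")
print("flagged tiles:", flagged)
```

In a real flow the validation step would use results from a later design stage (for example post-route data) rather than a slice of the same synthetic set, and the calibration step would tune how aggressively the optimizer acts on the predictions.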

Considering the increased attention given to ML during the 2017 TSMC Open Innovation Platform forum, where TSMC explored the use of ML to apply path-grouping during P&R to improve timing and Synopsys adopted ML to predict potential DRC hotspots, we are on the right track towards smarter solutions that balance the complexity challenges of high-density designs and finer process technologies.
